Yesterday I had the privilege of interviewing Sir Michael Atiyah. We talked about mathematical writing, the interplay of abstraction and application, and unified theories of physics. A shorter excerpt, focusing on mathematical exposition, appears on the HLF conference blog.

Update: I’ve posted the audio of the interview here.

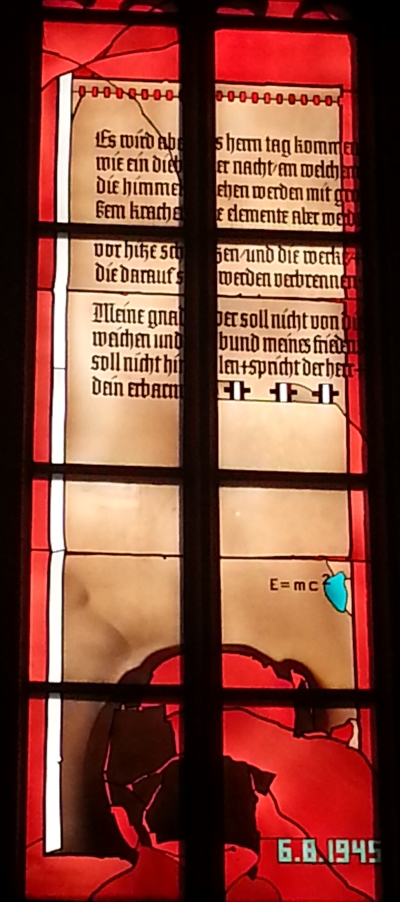

Photo by Bernhard Kreutzer

JC: I ran across your expository papers when I was a student and very much enjoyed reading them. Two things stood out that I still remember. One was your comment about one-handed pottery, that the person creating it might be very proud of what he was able to do with only one hand, but the user of the pottery doesn’t care.

MA: That was my response to the kind of highly technical mathematics that showed you could do something under extremely minimal hypotheses, but who cares? Sometimes it’s useful, but often it’s for the benefit of the technicians and not the general user.

JC: The other thing I remember from your papers was your rule that exposition should proceed from the particular to the abstract.

MA: I have strong views on that. Too many people write papers that are very abstract and at the end they may give some examples. It should be the other way around. You should start with understanding the interesting examples and build up to explain what the general phenomena are. This way you progress from initial understanding to more understanding. This is both for expository purposes and for mathematical purposes. It should always guide you.

When someone tells me a general theorem I say that I want an example that is both simple and significant. It’s very easy to give simple examples that are not very interesting or interesting examples that are very difficult. If there isn’t a simple, interesting case, forget it.

One feature of mathematics is its abstraction. You can encompass many things under one heading. We talk about waves. For us a wave is not a water wave, it’s an oscillating function. It’s a very useful concept to extract things out.

Mathematics is built on abstractions. On the other hand, we have to have concrete realizations of them. Your brain has to operate on two levels. It has to have abstract hierarchies, but it also has to have concrete steps you can put your feet on.

Everything useful in mathematics has been devised for a purpose. Even if you don’t know it, the guy who did it first, he knew what he was doing. Banach didn’t just develop Banach spaces for the sake of it. He wanted to put many spaces under one heading. Without knowing the examples, the whole thing is pointless. It is a mistake to focus on the techniques without constantly, not only at the beginning, knowing what the good examples are, where they came from, why they’re done. The abstractions and the examples have to go hand in hand.

You can’t just do particularity. You can’t just pile one theorem on top of another and call it mathematics. You have to have some architecture, some structure. Poincaré said science is not just a collection of facts any more than a house is a collection of bricks. You have to put it together the right way. You can’t just list 101 theorems and say this is mathematics. That is not mathematics. That is categorization. Mathematics is not a dictionary.

JC: I think someone called that stamp collecting or bug collecting.

MA: Yes. Rutherford was very scathing about chemistry. He said there is Schrödinger’s equation, and everything else is cookery.

JC: I think people are more willing to give these examples verbally than in print.

MA: You’re quite right. It is kind of a reaction in recent times. People began to go beyond what they could prove and got a bit woolly and they got a bad reputation, like some of the Italian algebraic geometers. So you are taught to be rigorous, and you learn to be very, very rigorous. And then you think that your papers have to be ultra-rigorous, otherwise the guys behind you will find fault with what you’re saying. The need for rigor, it’s a bit like the people who are afraid of being sued by the lawyers. If you don’t put it down, 100%, someone will sue you! People cover themselves in advance, but the result is an unreadable paper.

But when you talk like this, you’re allowed to lower the level of rigor in order to increase the power of explanation. You can explain things using hand waving, using analogies, leaving out technical details, because you want to get the idea across. But when people write, particularly mathematics, and particularly in the last decades of the last century, people became very formalistic. Papers were rejected if they were not rigorous enough. People were reacting to the loose talk of the past. So we went to the other extreme. And most papers aren’t read. That’s a theorem! Someone said the average number of readers of a paper is one, and that’s the author.

JC: And with multiple authors, maybe it’s a fraction.

MA: That’s right. I used to tell people, make your introduction understandable to a general mathematician. Don’t get into the technicalities there. Say you’ll deal with them later. Don’t just say “Let X be a space” and jump into the details, but that’s what people do. So you’re right. But people know when they give a lecture to behave differently. They can use all the tools of hand-waving, literally! [waves hands], to get the idea across.

Some people write so well it’s as good as a lecture. Hermann Weyl is my great model. He used to write beautiful literature. Reading it was a joy because he put a lot of thought into it. Hermann Weyl wrote a book called The Classical Groups, very famous book, and at the same time a book appeared by Chevalley called Lie Groups. Chevalley’s book was compact, all the theorems are there, very precise, Bourbaki-style definitions. If you want to learn about it, it’s all there. Hermann Weyl’s book was the other way around. It is discursive, expansive, it’s a bigger book, it tells you much more than you need to know. It’s the kind of book you read ten times. Chevalley’s book you read once. You go back to these other books. They’re full of throw-away lines, insights that are not fully relevant for going from A to B. He’s trying to give you a vision for C. Not many people write like that. He was an exception.

In the introduction he explains that he’s German, he’s writing in a foreign language, he apologizes saying he is writing in a language that was “not sung by the gods at my cradle.” You can’t get any more poetic than that. I’m a great admirer of his style, of his exposition and his mathematics. They go together.

JC: I ran across an old paper by Halmos recently where he refers to your index theorem as a pinnacle of abstraction, and I thought about the comparison with Shinichi Mochizuki’s claimed proof of the abc conjecture. The index theorem rests on a large number of abstractions, yet these are widely shared abstractions. Mochizuki’s proof also sits on top of many abstractions, but nobody understands it.

MA: These problems have a long history. The past is part of your common heritage. They generalize classical theorems. They go step by step through abstractions. Everybody knows what they’re for. So there’s a big pyramid. The base is very firm. The purpose of abstraction is that you can go back down to earth and apply it here or apply it there. You don’t just disappear in the clouds. You walk along the ridge and go down to this valley or that.

JC: Maybe the proof of the abc conjecture is more of a flag pole than a pyramid.

MA: And when you get to the top of a flag pole, what do you do? You could be like the hermit saints who would sit on a pillar. …

JC: I saw a video recently of a talk you gave a couple years ago in which you described yourself as a “mystic,” someone hoping for a unification of several diverse theories of physics.

MA: That’s right. I was saying that there are these different schools of thought, each with a prophet leading them. I wanted to say that I didn’t follow any one of them, that you can search for the truth through many different ways. The mystic thinks there’s a little bit of truth in everybody’s line. I think every interesting line has something to be said for it.

There are the string theorists, that’s the biggest school, and people like Roger Penrose, Alain Connes, … They all have strong ideas, and they attract a certain group of followers. These are serious people, there’s serious reason to believe what they’re doing, and there’s something in it. Bridges have been built between these theories. They’ve found unexpected links between them. So that’s already a bit of convergence. So I’m hoping there will be more convergence, using the best of each theory.

It would be sad if we’ve seen the last of the visions of the prophets. It would be sad if new fundamental insights were no more. There’s no reason it should stop. At the end of the 19th century people thought physics was finished: we know absolutely everything. But at the beginning of the 20th century it exploded. There’s no reason not to think there will be further revolutionary ideas emerging. Any day, any time, anywhere.

I’m an optimist. I believe in new ideas, in progress. It’s faith. I’ve recently been thinking about faith. If you’re a religious person, which I’m not, you believe God created the universe. That’s why it works, and you’re trying to understand God’s works. There are many scientists who work in that framework. Scientists, outside of religion, have their own faith. They believe the universe is rational. They’re trying to find the laws of nature. But why are there laws? That’s the article of faith for scientists. It’s not rational. It’s useful. It’s practical. There’s evidence in its favor: the sun does rise every day. But nevertheless, at the end of the day, it’s an article of faith.

I gave a talk about Pythagoras. Bertrand Russell said that Pythagoras was probably the most influential philosopher who ever existed, because he was the first person to emphasize two things, the role of numbers in general, and the role of numbers in music in particular. He was so struck by this that he believed numbers were the base of the universe. He was the first person to articulate the view that the universe is rational, built on rational principles.

JC: What do you think of category theory? Do you think it plays a role in this grand unification?

MA: Well, I’m a conservative in many ways. As you get older you get more conservative. And I don’t believe in things unless I’m convinced of their utility. Ordinary categories are a useful language. But it’s now become more formal. There are things called 2-categories, 3-categories, building categories on categories. I’m now partially converted. I think it may be necessary. Algebra was an abstraction when it first came along. Then you get into categories and higher levels of abstraction. So I’m broad-minded about it, but I’d like to be persuaded.