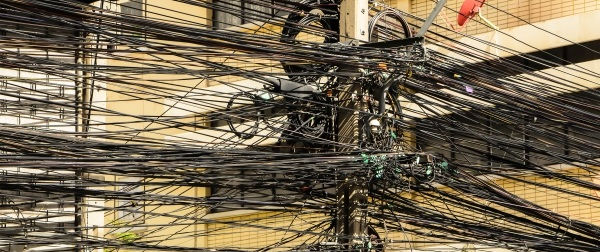

As systems get larger and more complex, we need new tools to test whether these systems are correctly specified and implemented. These tools may not be new per se, but they may be applied with new urgency.

Dimensional analysis is a well-established method of error detection. Simply checking that you’re not doing something like adding things that aren’t meaningful to add is surprisingly useful for detecting errors. For example, if you’re computing an area, does your result have units of area? This seems like a ridiculously basic question to ask, and yet it is more effective than it would seem.

Type theory and category theory are extensions of the idea of dimensional analysis. Simply asking of a function “Exactly what kind of thing does it take in? And what sort of thing does it put out?” is a powerful way to find errors. And in practice it may be hard to get answers to these questions. When you’re working with a system that wasn’t designed to make such things explicit and clear, it may be that nobody knows for certain, or people believe they know but have incompatible ideas. Type theory and category theory can do much more than nudge you to define functions well, but even that much is a good start.

Specifying types is more powerful than it seems. With dependent types, you can incorporate so much information in the type system that showing that an instance of a type exists is equivalent to proving a theorem. This is a consequence of the Curry-Howard correspondence (“Propositions as types”) and is the basis for proof assistants like Coq.

We’d like to suggest the term Big Logic for the application of logic on a large scale, using logic to prove properties of systems that are too complex for a typical human mind to thoroughly understand. It’s well known that systems have gotten larger, more connected, and more complex. It’s not as well known how much the tools of Big Logic have improved, making formal verification practical for more projects than it used to be.

Trusted consultants to some of the world’s leading companies