Ramanujan posed the following puzzle to Journal of Indian Mathematical Society. What is the value of the following?

He waited over six months, and when nobody replied he gave his solution. The result is simply 3.

Now suppose you didn’t know an algebraic proof and wanted to evaluate the expression numerically. The code below will evaluate Ramanujan’s expression down to n levels.

def f(n):

t = 1.0

for k in range(n, 1, -1):

t = sqrt(k*t + 1)

return t

(The range function above produces the sequence n, n-1, … 2.)

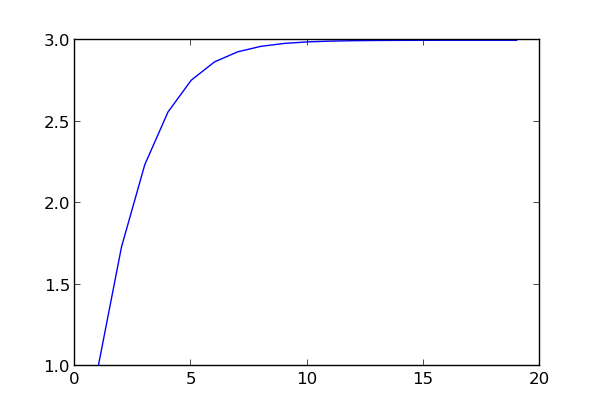

The sequence of f(n) values converges rapidly to 3 as the plot below shows.

The code above evaluates the expression from the inside out, and so you need to know where you’re going to stop before you start. I don’t see how to write a different algorithm that would let you compute f(n+1) taking advantage of having computed f(n). Do you? Can you think of a way to evaluate f(1), f(2), f(3), … f(N) in less than O(N2) time? Will your algorithm if some or all of the numbers in Ramanujan’s expression change?

Using known result and trivial calculations, we can get value of any inner radical:

sqrt(1 + 2 sqrt(1 + 4…)) = 3

sqrt(1 + 3 sqrt(1 + 4…)) = 4

sqrt(1 + 4 sqrt(1 + 5…)) = 5

sqrt(1 + 5 sqrt(1 + 6…)) = 6

…

That’s rather interesting itself too

Denoting f(n) = sqrt(1+(n+1)*f(n+1)), you come to the equation f(n+1) = (f(n)^2 – 1)/(n+1) (1)

Asymptotically you have f(n)^2 ~ (n+1)f(n+1). You can assume the form of f(n) = a*n + b, and injecting that into (1), you have n^2*(a^2 – a) + n*(2*a*b – 2*a – b) + (b^2 – 1 – a – b) = 0 for all n, which means : a = 1, b = 2. So f(n) = n + 2, i.e. f(1) = 3, and the rest follows.

If you take n steps to calculate f(n) it is already O(N), what sense makes to ask “Can you think of a way to evaluate f(1), f(2), f(3), … f(N) in less than O(N^2) time?” ?

Fran: Yes, if you use n steps to calculate each f(n), then evaluating f(1) … f(N) takes N(N+1)/2 steps. The question is whether you can reuse some of your work in calculating one of the values in order to less work in calculating another value.

For example, suppose we were talking about partial sums. Then calculating each f(n) separately takes n steps, but you could find all partial sums up to the Nth in the same time that it takes to calculate the Nth partial sum alone just by saving work you’re doing anyway.

I think I might have a solution to your puzzle. Strategy is to map out the intermediates in a tree which when reduced has each result stored in its leaves and then flatten. Here’s the gist:

https://gist.github.com/prakatmac/6555030

John: Oh, I see what you mean.

Pramukta: I’d say you are not accounting for the steps in your “for r in resp” loop. If you do you still have O(N^2). Nice try though :)

double nested_radical(int n)

{

double ri ;

int i;

i = n;

ri = sqrt(1+(i-1)*sqrt(i+1));

for (i=n-1; i > 1 ; –i)

{

ri = sqrt(1+i*ri) ;

}

return ri;

}

double ramanujan(int n)

{

double r ;

int i;

r = n;

for(i=n-1; i >= 2; –i) r = (i-1)*sqrt(1+r);

return r;

/*

rn = n

rn-1 = (n-1) sqrt(1+rn)

rn-2 = (n-2) sqrt(1+rn-1)

rn-3 = (n-3) sqrt(1+rn-2)

…

r5 = 5 sqrt(1+r6)

r4 = 4 sqrt(1+r5)

r3 = 3 sqrt(1+r4)

r2 = 2 sqrt(1+r3)

r1 = sqrt(1+r2)

*/

}

If you let g(x) = sqrt(1+x), then the quantity to be evaluated is f(x) = g( 2g( 3g( … ))). Is there some slick theorem related to composition of functions that gets you to f(x) = 3 (…and does that really hold for all x > -1)?

I don’t think there is a way to compute from the outside in without doing the algebra.

x^2 = 1 + (x^2-1)

x^2 = 1 + (x-1)(x+1)

x = sqrt(1 + (x-1)(x+1))

x+1 = sqrt(1 + x(x+2))

x+2 = sqrt(1 + (x+1)(x+3))

…

So you can see if you keep expanding the last term you get the proposed nested radical where x-1=2, or x=3.

That is awesomely elegant, Derek. Thanks.

Fran: Ah yes, I see it now. You are absolutely right.

Y= √(1+(1+1)√(1+(1+1+1)√(….)))

1s increase in each next bracket

Open the brackets:

Y=√(1+(√(√x+√x))+(√(√x+√x) ))

X=√(√x+√x))+(√(√x+√x)

2√2x=x

X2=8x

X=8

Y=√(1+8)

Y=3

I have no proficiency in English. Could you tell me if anyone has found a solution by deductive way? I found a possible solution through mathematical proof. But now there demonstration, the challenge practically ceases to exist. someone could answer my question?

Thanks for the reply. Perhaps with the French or the Indians themselves I can maintain a true dialogue!!

Ramanujan is not human, he is a beast.

I just want to understand how Ramanujan held its deduction. In mathematics , as we all know , resolutions are not validated by computational induction . I think I have an outcome ( achieved by myself ) using the deductive method . I wonder if my deduction is near or far from the deductive process created by Ramanujan , which was undoubtedly a brilliant mathematician .

Lênio, I suspect that Ramanujan arrived at his result the way Derek illustrated above, when playing around with the fun fact that (y-1)(y+1) = y^2 – 1.

1. Note that (y-1)(y+1) = y^2 – 1, or equivalently

y^2 = 1 + (y-1)(y+1)

2. Take the square root of both sides to get

y = sqrt(1 + (y-1)(y+1))

3. Substitute y=x, y=x+1, y=x+2, etc. into this identity to get:

x = sqrt(1 + (x-1)(x+1))

x+1 = sqrt(1 + x(x+2))

x+2 = sqrt(1 + (x+1)(x+3))

…

4. Use these identities to expand the last term inside the radical repeatedly:

x = sqrt(1 + (x-1)(x+1))

= sqrt(1 + (x-1)sqrt(1 + x(x+2)))

= sqrt(1 + (x-1)sqrt(1 + x*sqrt(1 + (x+1)(x+3))))

= …

5. Let x = 3:

3 = sqrt(1 + 2*sqrt(1 + 3*sqrt(1 + 4*sqrt(1 + …))))

Et voilà

I found a discussion of the problem and the solution as it was presented:

http://zariski.files.wordpress.com/2010/05/sr_nroots.pdf

The approach is broadly similar, though I should hasten to add it includes an interesting generalization. I swear I only found it today. :-)

Thanks, Derek and Dave!

Let f(n) = n√1+(n+1)√1+(n+2)√1+…..

Then (f(n)÷n)² -1=f(n+1)

ie f(n)²=f(n+1)×n²+n²

ie f(n)²=(f(n+1)+1)×n² — (1)

(Here I make a hunch that f(n) be a polynomial in n)

Let degree of f(n) be m , then by (1) 2m=m+2 ⇒ m=2 .

Assume f(n) = an²+bn+c

Substitute in (1) . Equate the coefficients . you get a=1,b=2,c=0 .

ie f(n)=n(n+2) .

Therefore f(1)=3 .