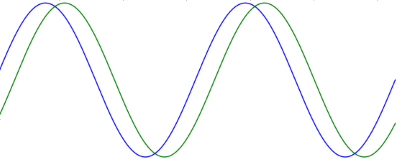

What is the correlation of two sine waves that differ in phase? The result itself is interesting, and the calculation along the way shows tricks to avoid calculating integrals.

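The correlation of two periodic signals, f and g, is

$$
\rho(f, g) = \frac{\int \bigl(f(t) - \mu_f\bigr)\bigl(g(t) - \mu_g\bigr)\, dt}{\sqrt{\int \bigl(f(t) - \mu_f\bigr)^2\, dt \,\int \bigl(g(t) - \mu_g\bigr)^2\, dt}}
$$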

where the integral is over a period of the two functions. For functions known at discrete points this would be a sum rather than an integral, but in this case we have continuous signals so we integrate.
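For signals known only at discrete points, the sum version might look like the following minimal sketch. The function name `periodic_correlation` and its parameters are illustrative choices, not something from a library, and the sketch assumes equally spaced samples covering exactly one period.

```python
import math

def periodic_correlation(f, g, period=2 * math.pi, n=1000):
    """Approximate the correlation of f and g from n equally spaced
    samples over one period (hypothetical helper, for illustration)."""
    ts = [period * k / n for k in range(n)]
    fs = [f(t) for t in ts]
    gs = [g(t) for t in ts]
    # Sample means stand in for the average values mu_f and mu_g.
    mu_f = math.fsum(fs) / n
    mu_g = math.fsum(gs) / n
    # The sample spacing appears in both numerator and denominator,
    # so it cancels and plain sums are enough.
    num = math.fsum((a - mu_f) * (b - mu_g) for a, b in zip(fs, gs))
    den = math.sqrt(
        math.fsum((a - mu_f) ** 2 for a in fs)
        * math.fsum((b - mu_g) ** 2 for b in gs)
    )
    return num / den

print(periodic_correlation(math.sin, math.sin))
print(periodic_correlation(math.sin, math.cos))
```

A signal should correlate perfectly with itself, and sine and cosine over a full period should come out essentially uncorrelated, up to floating-point rounding.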

In our case the two functions are f(t) = sin(t) and g(t) = sin(t + φ) and the integrals are over [0, 2π]. Both functions have average value 0, so the μ terms go away.

We use a trig identity to expand the numerator:

$$
\sin\theta_1 \sin\theta_2 = \frac{\cos(\theta_1 - \theta_2) - \cos(\theta_1 + \theta_2)}{2}
$$

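In our case θ₁ = t and θ₂ = t + φ, and so the numerator becomes

$$
\int_0^{2\pi} \sin(t)\,\sin(t + \varphi)\, dt = \frac{1}{2}\int_0^{2\pi} \cos\varphi\, dt - \frac{1}{2}\int_0^{2\pi} \cos(2t + \varphi)\, dt = \pi\cos\varphi
$$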

The first part of the integral is integrating a constant (with respect to t) and so becomes the constant times the length of the integration range. The second part of the integral is zero because it is integrating a cosine over two periods.
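As a sanity check on that argument, here is a small sketch that estimates the numerator integral with a midpoint rule and compares it with π cos φ, the constant (cos φ)/2 times the interval length 2π. The helper name `numerator` and the grid size are assumptions made for illustration.

```python
import math

def numerator(phi, n=1000):
    """Midpoint-rule estimate of the integral of sin(t) * sin(t + phi)
    over [0, 2*pi] (hypothetical helper, for illustration)."""
    h = 2 * math.pi / n
    return h * math.fsum(
        math.sin((k + 0.5) * h) * math.sin((k + 0.5) * h + phi)
        for k in range(n)
    )

# Compare the numerical estimate with pi * cos(phi) for a few phases.
for phi in (0.0, math.pi / 3, math.pi / 2, math.pi):
    print(f"phi={phi:.4f}  numeric={numerator(phi):+.6f}  "
          f"pi*cos(phi)={math.pi * math.cos(phi):+.6f}")
```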

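Now for the denominator. Over a full period, sin²(t) and cos²(t) take on the same values, just shifted, so their integrals are equal. The integral of sin²(t) is therefore half the integral of sin²(t) + cos²(t) = 1. Therefore

$$
\int_0^{2\pi} \sin^2(t)\, dt = \frac{1}{2}\int_0^{2\pi} 1\, dt = \pi
$$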

and the same argument shows that the integral of sin²(t + φ) is also π. The denominator is thus √(π · π) = π, and dividing the numerator π cos φ by it leaves simply cos φ: the correlation of two out-of-phase sine waves is the cosine of their phase difference.

It may be a little surprising that it works out to be so simple, but the result makes sense. When φ = 0, or any multiple of 2π, the waves are identical and so the correlation should be 1. When φ = π, or any odd multiple of π, the two waves are perfectly out of phase, and so the correlation should be −1. In between these extremes the correlation varies smoothly between 1 and −1; in fact, it is a cosine.
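The closed form is easy to check numerically. The sketch below sweeps a few phase shifts and prints the sampled correlation next to cos φ. The function name `sine_correlation` and the choice of 360 samples are assumptions. The mean terms are left out because both waves average to zero over a full period.

```python
import math

def sine_correlation(phi, n=360):
    """Correlation of sin(t) and sin(t + phi) from n equally spaced
    samples on [0, 2*pi) (hypothetical helper, for illustration)."""
    ts = [2 * math.pi * k / n for k in range(n)]
    num = math.fsum(math.sin(t) * math.sin(t + phi) for t in ts)
    den = math.sqrt(
        math.fsum(math.sin(t) ** 2 for t in ts)
        * math.fsum(math.sin(t + phi) ** 2 for t in ts)
    )
    return num / den

for degrees in (0, 45, 90, 135, 180):
    phi = math.radians(degrees)
    print(f"{degrees:3d} deg  correlation={sine_correlation(phi):+.6f}  "
          f"cos(phi)={math.cos(phi):+.6f}")
```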