This post gives an algorithm based on the arithmetic-geometric mean that rapidly converges to pi. I’ll use it to illustrate multiple precision arithmetic using Python’s mpmath module.

Given two non-negative numbers a and b, their arithmetic mean is (a + b)/2 and their geometric mean is √(ab). Suppose you start with two non-negative numbers and take their arithmetic mean and geometric mean. Then take the arithmetic and geometric mean of the results. Keep doing this repeatedly, and the result converges to what is called the arithmetic-geometric mean (AGM).

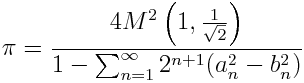

There is a formula for calculating pi based on the AGM that goes back to Gauss.

Here M(a, b) is the AGM of a and b, and an and bn are the values after n steps of the iteration.

The process defining the AGM converges quickly, and so the formula is practical for computing pi. I found it in a paper from 1988, and at that time the formula had been used to compute pi to over 29 million decimal places. In the code below, we compute pi to 997 decimal places in only 10 iterations.

from mpmath import mp, sqrt, mpf, pi, log10, fabs

# carry out calculations to 1000 decimal places

decimal_places = 1000

mp.dps = decimal_places

epsilon = 1/mpf(10**decimal_places)

# set a = 1 and b = 1/sqrt(2) as multi-precision numbers

a = mpf(1)

b = 1/sqrt(mpf(2))

diff = a - b

series = mpf(0)

n = 0

while diff > epsilon:

n += 1

arith = (a + b)/2

geom = sqrt(a*b)

a, b = arith, geom

series += 2**(n+1) * (a*a - b*b)

diff = a - b

# a and b have converged to the AGM

my_pi = 4*a*a/(1 - series)

error = fabs(pi - my_pi)

decimal_places = int(-log10(error))

print "Number of steps used: %d" % n

print "Number of correct decimal places: %d" % decimal_places

The code can be used to calculate pi out further. The number of correct decimal places roughly doubles with each iteration. For example, computing pi to 10,000 places takes only 3 more iterations.

Reference: “Gauss, Landen, Ramanujan, the Arithmetic-Geometric Mean, Ellipses, pi, and the Ladies Diary” by Gert Almevist and Bruce Berndt. Available here.

what about the computation’s stability characteristics? in particular, if the summation is close to 1, or a_i close to b_i ?

How does your level of precision of sqrt(2) affect your level of precision of your result?

Steven: All calculations are carried out to the same precision, in this case 1000 decimal places. I suppose you’re asking how the square root of 2 accuracy propagates throughout the rest of the calculation since it is computed first. I don’t have a direct answer to that, but the final result of carrying all calculations out to 1000 places is that 3 decimal places are lost.

I don’t know just where those decimal places are lost. It would be interesting to look at this computation in more detail and see which steps contribute the most to the error.

It seems that about the same number of decimal places are lost as the precision varies. For example, when I reran the calculation with 20,000 decimal precision, I still lose 3 decimal place, i.e. the result is correct to 19,997 places. I suppose the precision loss depends on the number of iterations, not the precision. Using 20,000 decimal places only required 14 iterations, only 4 more than using 1,000 decimal places.

When I go up to 100,000 decimal places, it takes 17 iterations, and I lose 5 decimal places of precision. This is more evidence that the number of decimal places lost increases very slowly as the working precision increases.

On a hunch that the expression

(a*a - b*b)was contributing to loss of precision, I eventually discovered that the following version of the loop gives full precision:while diff > epsilon:

series += 2**n * diff**2

n += 1

arith = (a + b)/2

geom = sqrt(a*b)

a, b = arith, geom

diff = a - b

This comes from substituting

(a + b)/2foraandsqrt(a*b)forbin the expression(a*a - b*b), so that each term in the series is based on the previous values ofaandb.Loop is done while diff (=a-b) > epsilon.

Final error is pi – 4*a*a/(1 – series), not diff (=a-b).

Having come to this website by chance after attempting a calculation of my own using the Borwein quartic convergence AGM algorithm, I thought it might be of interest for you to know that in my implementation, I found a striking resemblance between your error in the decimals and my own – having noted almost exactly the same results with my version.

The book by Borwein & Borwein, Pi and the AGM (Canadian Mathematical Society Series of Monographs and Advanced Texts, Wiley-Interscience Publication, 1987) contains another algorithm to compute PI using arithmetic geometric means, given in the same chapter as the one you use. Algorithm 2.1 given on page 47, is slightly different. I implemented it and studied precisely the propagation of errors during computations. It turns out that the errors are proportional to the number of iterations, with a formula of the form:

e = 21 * n + 2 times ulp

Where ulp stands for “unit in the last place”, the difference between the two closest numbers you can represent. As soon as n is larger than 5, but until n reaches 22, you know that the error e is not more than 500, so this explains why three or four extra digits are required for a wide range of precisions (20 is enough for one million digit precision).

Of course, the algorithm I studied is not exactly the same as yours, but I expect them to be quite quite close in behavior. If you want to know more about my experience, you may want to look at the following article published by ACM:

http://dl.acm.org/citation.cfm?doid=2676724.2693172

A preprint is also available for free at the following address.

https://hal.inria.fr/hal-01074927

Accidentally, all mathematical proofs about this algorithm were verified using a proof system called Coq.

This is very nice algorithm, but I use to change it (slightly) ….

Try this: instead of calculating “diff” before loop as “diff=a-b” calculate it as “diff=b” (so it is sqrt(1/2)); inside loop: first calculate “diff=diff*diff/(4*a)” and after this “series=2**(n+1)*diff*diff”; “my_pi” is still calculated, after loop, as “my_pi=4*a*a/(1-series)”; that’s all …

Playing with the calculatlon of the AGM of numerical constants,

I found a close connection between Phi (1/2*(1+sqrt(5)) and Pi :

AGM(Phi**Pi,1/Phi**Pi) ~ Phi

(difference is only 0.2%)