From Nick Patterson’s interview on Talking Machines:

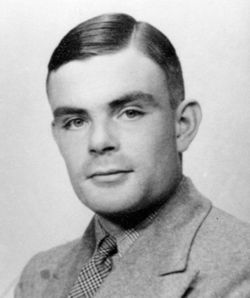

GCHQ in the ’70s, we thought of ourselves as completely Bayesian statisticians. All our data analysis was completely Bayesian, and that was a direct inheritance from Alan Turing. I’m not sure this has ever really been published, but Turing, almost as a sideline during his cryptoanalytic work, reinvented Bayesian statistics for himself. The work against Enigma and other German ciphers was fully Bayesian. …

Bayesian statistics was an extreme minority discipline in the ’70s. In academia, I only really know of two people who were working majorly in the field, Jimmy Savage … in the States and Dennis Lindley in Britain. And they were regarded as fringe figures in the statistics community. It’s extremely different now. The reason is that Bayesian statistics works. So eventually truth will out. There are many, many problems where Bayesian methods are obviously the right thing to do. But in the ’70s we understood that already in Britain in the classified environment.

GCHQ finally declassified two of Turing’s Bayesian manuals in 2012:

http://www.bbc.co.uk/news/technology-17771962

See also: https://arxiv.org/pdf/1505.04714.pdf, which includes the line: “Nearly all applications of probability to cryptography depend on the factor principle (or Bayes’ Theorem).”

You might enjoy sections 18.3 and 18.4 from the book “Information Theory, Inference, and Learning Algorithms”, by David J.C. MacKay, where the author talks a bit about the process at Bletchley, and the ‘ban’ unit, which measures changes in (log_10) probability, named for the village of Banbury.

Looks like RobertM’s arxiv.org link contains the original paper that my reference used; it has a section titled ‘Decibanage’.
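For anyone who wants to see the arithmetic behind the “factor principle” and the ban, here is a minimal sketch. It assumes the usual reading: posterior odds equal prior odds times the Bayes factor, and a deciban is ten times the log_10 of that factor, so independent pieces of evidence simply add. The function names and the example numbers are made up for illustration and are not taken from Turing’s paper or MacKay’s book.

```python
import math


def decibans(bayes_factor: float) -> float:
    """Weight of evidence in decibans: 10 * log10 of the Bayes factor."""
    return 10.0 * math.log10(bayes_factor)


def update_odds(prior_odds: float, factors) -> float:
    """Factor principle: posterior odds = prior odds * product of factors.

    The product is computed by summing decibans, which is the point of
    working on the log scale: independent evidence just adds up.
    """
    total_db = sum(decibans(f) for f in factors)
    return prior_odds * 10 ** (total_db / 10.0)


# Hypothetical example: prior odds of 1 to 100, then three independent
# pieces of evidence with Bayes factors 2, 5, and 10.
factors = [2.0, 5.0, 10.0]
posterior = update_odds(1.0 / 100.0, factors)
print(f"total evidence: {sum(decibans(f) for f in factors):.1f} decibans")
print(f"posterior odds: {posterior:.3f}")
```

In this made-up case the three factors multiply to 100, which is 20 decibans, just enough to bring odds of 1 to 100 up to even.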

John:

You might be interested in this article, “‘Not only defended but also applied’: The perceived absurdity of Bayesian inference,” by Christian Robert and myself, and its followup, “The anti-Bayesian moment and its passing.”

Hate to be a picker of nits, but is this quoted sentence correct: “So eventually truth will out”?