I ran across a GitHub repo today that features an amusing hack using the sign bit of NaNs for unintended purposes. This is an example of how IEEE floating point numbers have a lot of leftover space devoted to NaNs and infinities. However, relative to the enormous number of valid 64-bit floating point numbers, this waste is negligible.

But when you scale down to low-precision floating point numbers, the overhead of the strange corners of IEEE floating point becomes significant. Interest in low-precision floating point comes from wanting to pack more numbers into memory at once when you don’t need much precision. Floating point numbers have long come in 64-bit and 32-bit varieties, but now there are 16-bit and even 8-bit versions.
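To put rough numbers on that overhead, here is a minimal sketch. It relies on the fact that in an IEEE-style layout a bit pattern is a NaN or an infinity exactly when its exponent field is all ones. The function name `special_share` is made up for illustration, and the 8-bit entry assumes a hypothetical layout with 1 sign bit, 4 exponent bits, and 3 fraction bits that follows the IEEE conventions; real 8-bit formats vary.

```python
from fractions import Fraction

def special_share(exp_bits, frac_bits):
    """Share of bit patterns that encode a NaN or an infinity.

    Assumes an IEEE-style layout: 1 sign bit, exp_bits exponent bits,
    frac_bits fraction bits, with an all-ones exponent reserved for
    NaNs and infinities.
    """
    total = 2 ** (1 + exp_bits + frac_bits)
    special = 2 * 2 ** frac_bits  # either sign, any fraction, exponent all ones
    return Fraction(special, total)

FORMATS = {
    "binary64": (11, 52),
    "binary32": (8, 23),
    "binary16": (5, 10),
    "8-bit (hypothetical 1-4-3 layout)": (4, 3),  # assumption, not a standard
}

for name, (exp_bits, frac_bits) in FORMATS.items():
    share = special_share(exp_bits, frac_bits)
    print(f"{name}: {share} of bit patterns ({float(share):.4%})")
```

The share works out to 1 in 2^e for e exponent bits, so it goes from about 0.05% of the bit patterns at 64 bits to over 6% in the hypothetical 8-bit layout.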

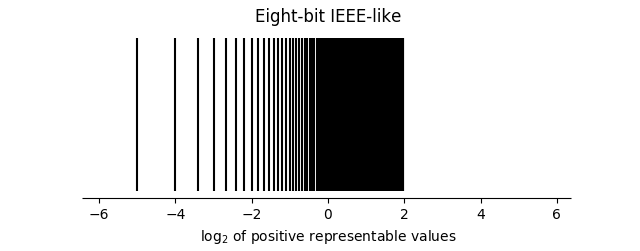

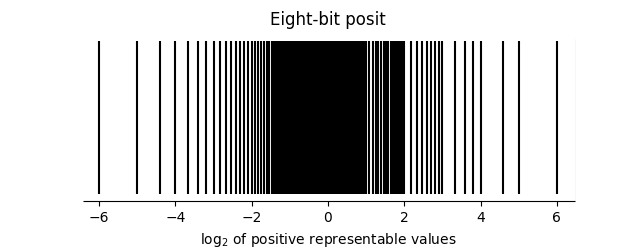

There are advantages to using completely new floating point formats for low precision numbers rather than scaling down the venerable IEEE format. Posit numbers have only one special number, a point at infinity. Every other bit pattern corresponds to a real number. Posits are also more usefully distributed, as illustrated in the image below, taken from here.

The statistical analysis software SAS spends a few values on coded missing values. This is useful in applications where you might want to distinguish why something is missing: invalid answer, blank, 0/0, etc. I don’t think it uses IEEE floats, since it actually predates IEEE 754; I believe it assigned the largest 50-something negative numbers to missing values. In theory you could use the payload of a NaN for the same thing, although I’m not aware of anything that uses it that way.
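A minimal sketch of the NaN payload idea, assuming 64-bit IEEE doubles and a made-up set of reason codes. The names `nan_with_code`, `code_of`, and `MISSING_REASONS` are hypothetical, not taken from SAS or any library. The payload survives a round trip through `struct` here, but arithmetic on the NaN is not guaranteed to preserve it.

```python
import math
import struct

QUIET_NAN = 0x7FF8_0000_0000_0000     # exponent all ones, top fraction bit set
PAYLOAD_MASK = 0x0007_FFFF_FFFF_FFFF  # the remaining 51 fraction bits

# Hypothetical reason codes, for illustration only.
MISSING_REASONS = {1: "invalid answer", 2: "blank", 3: "0/0"}

def nan_with_code(code):
    """Build a quiet NaN whose payload bits carry the given integer code."""
    if not 0 <= code <= PAYLOAD_MASK:
        raise ValueError("code does not fit in the NaN payload")
    bits = QUIET_NAN | code
    return struct.unpack("<d", struct.pack("<Q", bits))[0]

def code_of(x):
    """Read the payload code back out of a NaN."""
    if not math.isnan(x):
        raise ValueError("not a NaN")
    bits = struct.unpack("<Q", struct.pack("<d", x))[0]
    return bits & PAYLOAD_MASK

missing = nan_with_code(3)
print(math.isnan(missing), MISSING_REASONS[code_of(missing)])
```

Every such value still behaves as an ordinary NaN in comparisons, so code that only checks `math.isnan` would not need to know about the codes.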

Many computer processors assume that floating-point operands are normalized and will indulge in all kinds of undocumented behavior if they are not. DEC’s assembler for the PDP-10 took advantage of this to set up the parameters for a binary search of the symbol table using a single floating-point instruction, thereby speeding up the most important bottleneck in the software. The operand and the result of the instruction were nonsense in terms of its documented purpose, but gave as a side effect the powers of two next below and next above the number of entries in the table, which was exactly what was needed.

Unless I’m misremembering my IEEE FP encodings, the “leftover space” is basically all NaN. There are just 2 bit patterns for infinity (+/-).
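One way to check this is to enumerate every 16-bit pattern and classify it. The sketch below assumes Python's `struct` half-precision format code `"e"` (IEEE binary16); the function name `classify_half_patterns` is made up for illustration.

```python
import math
import struct

def classify_half_patterns():
    """Count NaN, infinite, and finite values among all 2**16 binary16 bit patterns."""
    counts = {"nan": 0, "inf": 0, "finite": 0}
    for bits in range(2 ** 16):
        # Reinterpret the 16-bit integer as a half-precision float.
        x = struct.unpack("<e", struct.pack("<H", bits))[0]
        if math.isnan(x):
            counts["nan"] += 1
        elif math.isinf(x):
            counts["inf"] += 1
        else:
            counts["finite"] += 1
    return counts

print(classify_half_patterns())
```

For half precision this gives 2046 NaNs against 2 infinities, so nearly all of the special bit patterns are indeed NaNs.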