The solution to a differential equation is called oscillatory if its set of zeros is unbounded. This does not necessarily mean that the solution is periodic, but that it crosses the horizontal axis infinitely often.

Fowler [1] studied the following differential equation, which demonstrates both oscillatory and nonoscillatory behavior.

x″ + t^σ |x|^γ sgn x = 0

He proved that

1. If σ + 2 ≥ 0 then all solutions oscillate.

2. If σ + (γ + 3)/2 < 0 then no solutions oscillate.

3. If neither (1) nor (2) holds then some solutions oscillate and some do not.
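The three cases above are easy to encode. Here is a minimal sketch; the function name `fowler_case` is made up for illustration, and it assumes γ ≥ 1, where the first two conditions cannot both hold, so checking them in order is safe.

```python
def fowler_case(sigma: float, gamma: float) -> int:
    """Return which of the three cases above applies (assumes gamma >= 1)."""
    if sigma + 2 >= 0:
        return 1  # all solutions oscillate
    if sigma + (gamma + 3) / 2 < 0:
        return 2  # no solutions oscillate
    return 3      # some solutions oscillate and some do not


print(fowler_case(-2.0, 1.0))  # sigma = -2, gamma = 1
print(fowler_case(-2.1, 1.0))  # slightly smaller sigma
print(fowler_case(-2.1, 1.5))  # smaller sigma and larger gamma
```

The three calls land in cases 1, 2, and 3 respectively, which shows how close together the three regimes sit in parameter space.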

The edge case is σ = −2 and γ = 1. This satisfies condition (1) above. A slight decrease in σ will push the equation into condition (2). And a simultaneous decrease in σ and increase in γ can put it in condition (3).

It turns out that the edge case can be solved in closed form. The equation is linear because γ = 1 and solutions are given by

c √t sin(φ + √3 log(t) / 2).
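For anyone who wants to check the formula, here is a short sketch of where it comes from. With σ = −2 and γ = 1 we have |x| sgn x = x, so the equation is an Euler (equidimensional) equation and the trial solution x = t^r works. The constants a and b below are just the usual arbitrary constants, rewritten at the end in amplitude-phase form.

```latex
\begin{align*}
x'' + t^{-2} x &= 0, \qquad x = t^r \\
r(r-1) + 1 &= 0 \quad\Longrightarrow\quad r = \frac{1 \pm i\sqrt{3}}{2} \\
x(t) &= \sqrt{t}\left( a \cos\!\left(\tfrac{\sqrt{3}}{2}\log t\right)
        + b \sin\!\left(\tfrac{\sqrt{3}}{2}\log t\right) \right)
      = c\,\sqrt{t}\,\sin\!\left(\varphi + \tfrac{\sqrt{3}}{2}\log t\right)
\end{align*}
```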

The solutions oscillate because the argument to sine is an unbounded function of t, and so it runs across multiples of π infinitely often. The distance between zero crossings increases exponentially with time because the logarithms of the crossings are evenly spaced.
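To put numbers on that, set the argument of the sine equal to kπ and solve for t, which gives zeros at t = exp(2(kπ − φ)/√3). The sketch below lists the first few; the helper name `zero_crossings` and the choice φ = 0 are assumptions made for illustration.

```python
import math


def zero_crossings(phi, k_values):
    """Zeros of c*sqrt(t)*sin(phi + sqrt(3)*log(t)/2), from phi + sqrt(3)*log(t)/2 = k*pi."""
    return [math.exp(2 * (k * math.pi - phi) / math.sqrt(3)) for k in k_values]


zeros = zero_crossings(phi=0.0, k_values=range(4))
ratio = math.exp(2 * math.pi / math.sqrt(3))  # factor between consecutive zeros

for t in zeros:
    print(f"{t:.4g}")
print(f"ratio between consecutive zeros: {ratio:.2f}")
```

Each zero is roughly 37.6 times further out than the one before it, so a plot over a modest range of t can easily contain no crossing at all.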

This post took a different turn after I started writing it. My original intention was to solve the differential equation numerically and say “See: when you change the parameters slightly you get what the theorem says.” But that didn’t work out.

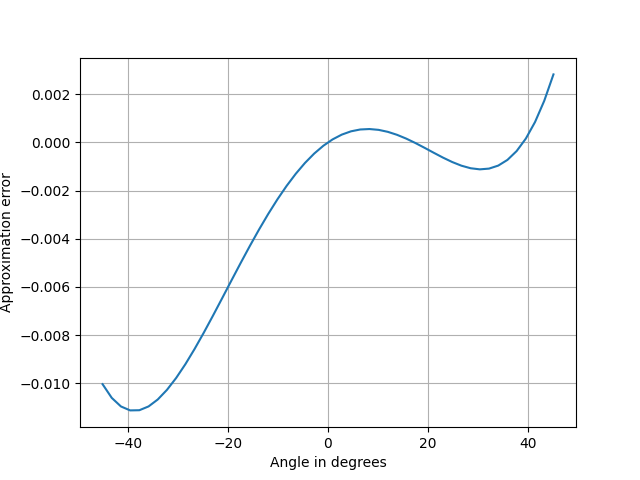

First I tried numerically computing the solution above with σ = −2 and γ = 1, but I didn’t see oscillations. I tried making σ a little larger, but still didn’t see oscillations. When I increased σ more I could see the oscillations.

I was able to go back and see the oscillations with σ = −2 and γ = 1 by tweaking the parameters in my ODE solver. It’s best practice to tweak your parameters even if everything looks right, just to make sure your solution is robust to algorithm changes. Maybe I would have done that, but since I already knew the analytic solution, I knew to tweak the parameters until I saw the right behavior.

Without some theory as a guide, it would be difficult to determine from numerical solutions alone whether a solution oscillates. If you see oscillations, then they’re probably real (unless your numerical method is unstable), but if you don’t see oscillations, how do you know you won’t see them if you look further out? How much further out should you look? In a practical application, the context of your problem will tell you how far out to look.
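Here is a minimal sketch of how much the horizon matters for the case σ = −2, γ = 1. It is not the solver or the settings used for the plot above: it is a hand-rolled fixed-step RK4, and the initial conditions x(1) = 0, x′(1) = 1, the step size, and the function names are all assumptions chosen for illustration. With those initial conditions the closed form puts the zeros after t = 1 near t ≈ 37.6 and t ≈ 1415.

```python
import math


def accel(t, x, sigma, gamma):
    """x'' from the equation x'' + t^sigma |x|^gamma sgn(x) = 0."""
    return -(t ** sigma) * abs(x) ** gamma * math.copysign(1.0, x)


def count_sign_changes(sigma, gamma, t_end, h=0.01, t0=1.0, x0=0.0, v0=1.0):
    """Integrate from t0 to t_end with fixed-step RK4 and count sign changes of x."""
    n = round((t_end - t0) / h)
    x, v = x0, v0
    last_sign, changes = 0, 0
    for i in range(n):
        t = t0 + i * h
        # Classical RK4 on the first-order system (x, v) with v = x'.
        k1x, k1v = v, accel(t, x, sigma, gamma)
        k2x, k2v = v + h / 2 * k1v, accel(t + h / 2, x + h / 2 * k1x, sigma, gamma)
        k3x, k3v = v + h / 2 * k2v, accel(t + h / 2, x + h / 2 * k2x, sigma, gamma)
        k4x, k4v = v + h * k3v, accel(t + h, x + h * k3x, sigma, gamma)
        x += h / 6 * (k1x + 2 * k2x + 2 * k3x + k4x)
        v += h / 6 * (k1v + 2 * k2v + 2 * k3v + k4v)
        # Compare against the last nonzero sign so an exact zero is not missed.
        sign = (x > 0) - (x < 0)
        if sign != 0:
            if last_sign != 0 and sign != last_sign:
                changes += 1
            last_sign = sign
    return changes


counts = {t_end: count_sign_changes(-2.0, 1.0, t_end) for t_end in (30.0, 100.0, 2000.0)}
for t_end, c in counts.items():
    print(f"t_end = {t_end:6.0f}: {c} sign change(s)")
```

A run that stops at t = 30 ends before the first crossing, so the solution looks nonoscillatory even though the theorem says it oscillates.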

My intention was to recommend the equation above as an illustration for an introductory DE class because it demonstrates the important fact that small changes to an equation can qualitatively change the behavior of the solutions. This is especially true for nonlinear DEs, which the above equation is when γ ≠ 1. It could still be used for that purpose, but it would make a better demonstration than a homework exercise. The instructor could tune the DE solver to make sure the solutions are qualitatively correct.

I still recommend the equation as an illustration, but for a course covering numerical solutions to DEs rather than an introductory one. It illustrates a different point than I first intended, namely the potentially finicky behavior of software for solving differential equations.

There are a couple of morals to this story. One is that numerical methods have not eliminated the need for theory. The ideal is to combine analytic and numerical methods. Analytic methods point out qualitative behavior that might be hard to discover numerically. And numerical methods are needed because analytic methods are not likely to produce closed-form solutions for nonlinear DEs.

Another moral is that it’s best to twiddle the parameters to your numerical method to make sure the solution doesn’t qualitatively change. If you’ve adequately computed a solution, computing it again with smaller integration steps shouldn’t make much difference. But if you do see a substantial difference, your first solution probably wasn’t as good as you thought.
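The step-halving check is simple to sketch. The code below uses a hand-rolled fixed-step RK4 rather than any particular library solver; the names `x_at` and `halving_gap`, the initial conditions x(1) = 0, x′(1) = 1, the endpoint T = 101, and the step sizes are all assumptions chosen for illustration. For σ = −2, γ = 1 the closed form is available, so the numerical answer can also be compared with it.

```python
import math


def x_at(t_end, h, sigma=-2.0, gamma=1.0, t0=1.0, x0=0.0, v0=1.0):
    """Fixed-step RK4 estimate of x(t_end) for x'' + t^sigma |x|^gamma sgn(x) = 0."""
    def f(t, x, v):
        return v, -(t ** sigma) * abs(x) ** gamma * math.copysign(1.0, x)

    x, v = x0, v0
    for i in range(round((t_end - t0) / h)):
        t = t0 + i * h
        k1 = f(t, x, v)
        k2 = f(t + h / 2, x + h / 2 * k1[0], v + h / 2 * k1[1])
        k3 = f(t + h / 2, x + h / 2 * k2[0], v + h / 2 * k2[1])
        k4 = f(t + h, x + h * k3[0], v + h * k3[1])
        x += h / 6 * (k1[0] + 2 * k2[0] + 2 * k3[0] + k4[0])
        v += h / 6 * (k1[1] + 2 * k2[1] + 2 * k3[1] + k4[1])
    return x


def halving_gap(t_end, h):
    """Difference between the answers computed with step h and step h/2."""
    return abs(x_at(t_end, h) - x_at(t_end, h / 2))


T = 101.0
# Closed form for x(1) = 0, x'(1) = 1: c = 2/sqrt(3), phi = 0.
exact = (2 / math.sqrt(3)) * math.sqrt(T) * math.sin(math.sqrt(3) * math.log(T) / 2)

gaps = {h: halving_gap(T, h) for h in (1.0, 0.1, 0.01)}
for h, gap in gaps.items():
    print(f"h = {h:5.2f}: gap = {gap:.2e}, error = {abs(x_at(T, h) - exact):.2e}")
```

A gap that is large relative to the accuracy you need is the warning sign. In a nonlinear case there is no closed form to compare against, and the gap is the only one of the two columns you would have.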


[1] R. H. Fowler. Further studies of Emden’s and similar differential equations. Quarterly Journal of Mathematics. 2 (1931), pp. 259–288.