Thanks to the quadratic equation, it’s easy to tell whether a quadratic equation has a double root. The equation

has a double root if and only if the discriminant

is zero.

The discriminant of a cubic is much less known, and the analogs for higher order polynomials are unheard of. There is a discriminant for polynomials of all degrees, though the complexity of the discriminant grows quickly with the degree of the polynomial.

This post will derive the discriminant of a cubic and a quartic.

Resultants

The resultant of a pair of polynomials is zero if and only if the two polynomials have a common root. Resultants have come up in a couple previous posts about solving trigonometric equations.

A polynomial p(x) has a double root if p and its derivative p‘ are both zero somewhere. The discriminant of p is the resultant of p and p’.

The resultant of two polynomials is a determinant of their Sylvester matrix. This matrix is easier to describe by example than by equation. You basically fill a matrix with shifts of the coefficients of both polynomials and fill in the gaps with zeros.

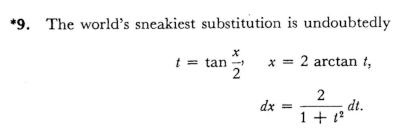

MathWorld gives the following Mathematica code for the Sylvester matrix of two inputs.

SylvesterMatrix1[poly1_, poly2_, var_] :=

Function[{coeffs1, coeffs2}, With[

{l1 = Length[coeffs1], l2 = Length[coeffs2]},

Join[

NestList[RotateRight, PadRight[coeffs1,

l1 + l2 - 2], l2 - 2],

NestList[RotateRight, PadRight[coeffs2,

l1 + l2 - 2], l1 - 2]

]

]

][

Reverse[CoefficientList[poly1, var]],

Reverse[CoefficientList[poly2, var]]

]

Cubic discriminant

If we apply this to the cubic polynomial

we get the following matrix.

We can compute the resultant by taking the determinant of the above matrix.

g[x_] := a x^3 + b x^2 + c x + d

SylvesterMatrix1[g[x], D[g[x], x], x]

We get the following result

and we can verify that this is the same result we would get from calling the Resultant directly with

Resultant[g[x], D[g[x], x], x]

Although the resultant is defined in terms of a determinant, that doesn’t mean that resultants are necessarily computed by computing determinants. The Sylvester matrix is a very special matrix, and there are clever ways to exploit its structure to create more efficient algorithms.

Each term in the resultant has a factor of a, and the discriminant is the resultant divided by −a.

Quartic discriminant

Now let’s repeat our exercise for the quartic. The Sylvester matrix for the quartic polynomial

and its derivative is

I created the image above with the following Mathematica code.

f[x_] := a x^4 + b x^3 + c x^2 + d x + e

TeXForm[SylvesterMatrix1[f[x], D[f[x], x], x]]

If we take the determinant, we get the resultant, but it’s a mess.

Again each term has a factor of a, so we can divide by a. to get the discriminant.

If we want to use this in code, we can have Mathematica export the expression in C code using CForm. To generate Python code, it’s more convenient to use FortranForm since Python like Fortran uses ** for exponents.

The following Python code was created by pasting the output of

FortranForm[Resultant[f[x], D[f[x], x], x]]

and making it into a function.

def quartic_resultant(a, b, c, d, e):

return a*b**2*c**2*d**2 - 4*a**2*c**3*d**2 - 4*a*b**3*d**3 + 18*a**2*b*c*d**3 \

- 27*a**3*d**4 - 4*a*b**2*c**3*e + 16*a**2*c**4*e + 18*a*b**3*c*d*e \

- 80*a**2*b*c**2*d*e - 6*a**2*b**2*d**2*e + 144*a**3*c*d**2*e \

- 27*a*b**4*e**2 + 144*a**2*b**2*c*e**2 - 128*a**3*c**2*e**2 \

- 192*a**3*b*d*e**2 + 256*a**4*e**3

Let’s try this on a couple examples. First

which has a double root at 0.

As expected

quartic_resultant(1, -5, 6, 0, 0)

returns 0.

Next let’s try

The call

quartic_resultant(1, -10, 35, -50, 24)

returns 144. We expected a non-zero result since our polynomial has distinct roots at 1, 2, 3, and 4.

Higher order discriminants

In general the discriminant of an nth degree polynomial is the resultant of the polynomial and its derivative, up to a constant. There’s no need to worry about this constant if you’re only concern is whether the discriminant is zero. To get the exact discriminant, you divide the resultant by the leading coefficient of the polynomial and adjust the sign.

The sign convention is a little strange. If you look back at the examples above, we divided by −a in the cubic case but we divided by a in the quartic case. You might reasonably guess that you should divide by a and multiply by (−1)n. But that won’t give you right sign for the quadratic case. The conventional sign is

(−1)n(n − 1)/2.

So when n equals 2 or 3 we get a negative sign, but when n equals 4 we don’t.

Related posts