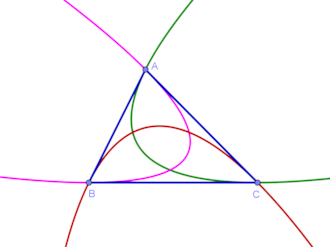

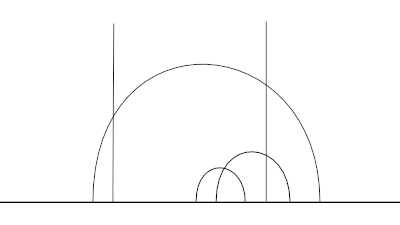

Suppose you have an arc a, a portion of a circle of radius r, and you know two things: the length c of the chord of the arc, and the length b of the chord of half the arc, as illustrated below.

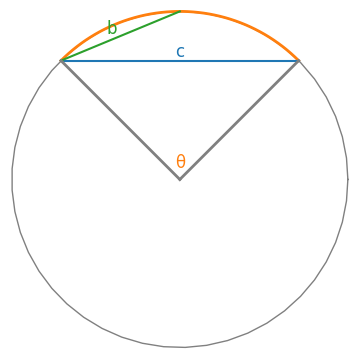

Here θ is the central angle of the arc. Then the length of the arc, rθ, is approximately

a = rθ ≈ 12 b²/(c + 4b).

If the arc is moderately small, the approximation is very accurate.
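To get a feel for how accurate the formula is, here is a minimal Python sketch that compares it with the exact arc length on a unit circle. The helper names `arc_length_approx` and `chords` are illustrative choices, not from the original source, and the chords are computed from the standard chord formula 2r sin(angle/2).

```python
import math

def arc_length_approx(b, c):
    """Approximate arc length from the half-arc chord b and the full chord c."""
    return 12 * b**2 / (c + 4 * b)

def chords(r, theta):
    """Exact chords for a circle of radius r and central angle theta (radians)."""
    c = 2 * r * math.sin(theta / 2)  # chord of the whole arc
    b = 2 * r * math.sin(theta / 4)  # chord of half the arc
    return b, c

for degrees in (15, 30, 60, 90, 180):
    theta = math.radians(degrees)
    b, c = chords(1.0, theta)
    exact = theta  # arc length r*theta with r = 1
    approx = arc_length_approx(b, c)
    rel_err = abs(approx - exact) / exact
    print(f"{degrees:4d} deg  exact={exact:.6f}  approx={approx:.6f}  rel_err={rel_err:.1e}")
```

The relative error grows with the central angle, so the table gives a rough sense of what "moderately small" means in practice.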

This approximation is simple, accurate, and not obvious, much like the one in this post.

Derivation

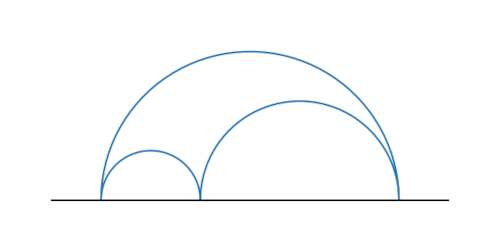

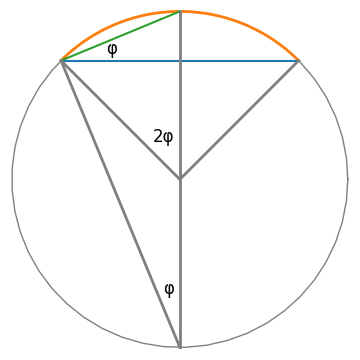

Let φ = θ/4. Then the angle between the chords b and c is φ. This follows from the inscribed angle theorem, illustrated below.

There are two right triangles in the diagram above that have an angle φ: a smaller triangle with hypotenuse b and a larger triangle with hypotenuse 2r. From the smaller triangle we learn

cos(φ) = c / (2b)

and from the larger triangle we learn

sin(φ) = b / (2r).

Now expand in power series. Since a = rθ = 4rφ and b = 2r sin(φ), we have 2b / a = sin(φ) / φ.

c / (2b) = cos(φ) = 1 − φ²/2! + φ⁴/4! − …

2b / a = sin(φ) / φ = 1 − φ²/3! + φ⁴/5! − …

If we multiply 2b / a by 3 and subtract c / (2b), then the φ² terms cancel and we get

6b / a − c / (2b) = 2 − φ⁴/60 + …

and so

6b / a − c / (2b) ≈ 2

to a very high degree of accuracy when φ is small. The approximation follows by solving for a: 6b / a ≈ 2 + c / (2b) = (c + 4b) / (2b), and so a ≈ 12 b²/(c + 4b).
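The cancellation in the series can be checked numerically. The sketch below evaluates 6b/a − c/(2b) directly from the two triangle relations and compares it with the two-term series 2 − φ⁴/60. The function name `lhs` and the sample values of φ are arbitrary choices made for illustration.

```python
import math

def lhs(phi, r=1.0):
    """Evaluate 6b/a - c/(2b) exactly for a given phi = theta/4."""
    b = 2 * r * math.sin(phi)  # from sin(phi) = b / (2r)
    c = 2 * b * math.cos(phi)  # from cos(phi) = c / (2b)
    a = 4 * r * phi            # arc length r*theta with theta = 4*phi
    return 6 * b / a - c / (2 * b)

for phi in (0.05, 0.1, 0.2, 0.4):
    exact = lhs(phi)
    series = 2 - phi**4 / 60
    print(f"phi={phi:.2f}  exact={exact:.10f}  series={series:.10f}  diff={exact - series:.1e}")
```

The two columns should agree closely for small φ, with the gap widening as φ grows and the neglected higher-order terms start to matter.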

Example

Let θ = π/3, so φ = π/12 = 0.26…, not a particularly small value of φ, but small enough for the approximation to work well.

Set r = 1 so a = θ. Then

b = 2 sin(π/12) = 0.51764

and

c = 2b cos(π/12) = 1.

In an application we would know b and c, not θ, so pretend we measured b = 0.51764 and c = 1. Then we would approximate a by

12b²/(c + 4b) = 1.04718

while the exact value is 1.04720. Unless you can measure lengths to more than four significant figures, the approximation may as well be exact, because the approximation error would be less than the measurement error.
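The example can be reproduced with a few lines of Python. This is a sketch under the same setup as above (r = 1, θ = π/3). The variable names are illustrative, and the "measured" value of b is simply the exact value rounded to five decimal places, as in the text.

```python
import math

theta = math.pi / 3
phi = theta / 4

# Exact chords on the unit circle.
b = 2 * math.sin(phi)
c = 2 * b * math.cos(phi)
print(f"b = {b:.5f}, c = {c:.5f}")

# Pretend we only have measurements rounded to five decimal places.
b_measured, c_measured = 0.51764, 1.0
approx = 12 * b_measured**2 / (c_measured + 4 * b_measured)

print(f"approximate arc length: {approx:.5f}")
print(f"exact arc length:       {theta:.5f}")
print(f"absolute error:         {abs(approx - theta):.1e}")
```

Rerunning the formula with the unrounded b shifts the result in about the sixth decimal place, which illustrates how rounding in the measurements is comparable in size to the error of the approximation itself.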

[1] J. M. Bruce. Approximation to a Circular Arc. The American Mathematical Monthly. Vol. 49, No. 3 (March 1942), pp. 184–185