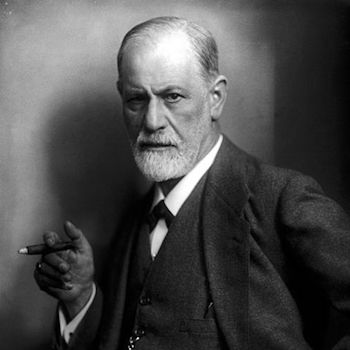

In his paper “Mindless statistics,” Gerd Gigerenzer uses a Freudian analogy to describe the mental conflict researchers experience over statistical hypothesis testing. He says that the “statistical ritual” of NHST (null hypothesis significance testing) “is a form of conflict resolution, like compulsive hand washing.”

In Gigerenzer’s analogy, the id represents Bayesian analysis. Deep down, a researcher wants to know the probabilities of hypotheses being true. This is something that Bayesian statistics makes possible, but more conventional frequentist statistics does not.

The ego represents R. A. Fisher’s significance testing: specify a null hypothesis only, not an alternative, and report a p-value. Significance is calculated after collecting the data. This makes it easy to publish papers. The researcher never clearly states his hypothesis, and yet takes credit for having established it after rejecting the null. This leads to feelings of guilt and shame.

The superego represents the Neyman-Pearson version of hypothesis testing: pre-specified alternative hypotheses, power and sample size calculations, etc. Neyman and Pearson insist that hypothesis testing is about what to do, not what to believe. [1]

I assume Gigerenzer doesn’t take this analogy too seriously. In context, it’s a humorous interlude in his polemic against rote statistical ritual.

But there really is a conflict in hypothesis testing. Researchers naturally think in Bayesian terms, and interpret frequentist results as if they were Bayesian. They really do want probabilities associated with hypotheses, and will imagine they have them even though frequentist theory explicitly forbids this. The rest of the analogy, comparing the ego and superego to Fisher and Neyman-Pearson respectively, seems weaker to me. But I suppose you could imagine Neyman and Pearson playing the role of your conscience, making you feel guilty about the pragmatic but unprincipled use of p-values.
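To make the gap between a p-value and the probability of a hypothesis concrete, here is a small sketch. The setup is my own illustration, not something from Gigerenzer's paper: a coin is flipped 100 times and lands heads 60 times, the null hypothesis is that the coin is fair, and for the Bayesian calculation I assume equal prior odds on the null and the alternative, with a uniform prior on the heads probability under the alternative. The function names are hypothetical.

```python
from math import comb

def two_sided_p_value(k, n):
    """Exact two-sided binomial p-value for H0: theta = 0.5."""
    # Binomial(n, 0.5) is symmetric, so double the tail that is
    # at least as extreme as the observed count.
    tail_start = max(k, n - k)
    tail = sum(comb(n, i) for i in range(tail_start, n + 1)) / 2**n
    return min(1.0, 2 * tail)

def posterior_prob_null(k, n, prior_null=0.5):
    """P(H0 | data) for H0: theta = 0.5 versus H1: theta ~ Uniform(0, 1).

    The priors here are illustrative assumptions, not a recommendation.
    """
    # Marginal likelihood of the data under the point null.
    m0 = comb(n, k) / 2**n
    # Under a uniform prior, the binomial likelihood integrates to 1/(n+1).
    m1 = 1 / (n + 1)
    return prior_null * m0 / (prior_null * m0 + (1 - prior_null) * m1)

n, k = 100, 60  # hypothetical data: 60 heads in 100 flips
print(f"p-value:         {two_sided_p_value(k, n):.3f}")
print(f"P(H0 | data):    {posterior_prob_null(k, n):.3f}")
```

Under these assumptions the p-value comes out a little above 0.05, while the posterior probability that the coin is fair is still around one half. Different priors would give different posteriors, but the point stands: the p-value is not the probability that the null hypothesis is true, even though it is tempting to read it that way.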

* * *

[1] “No test based upon a theory of probability can by itself provide any valuable evidence of the truth or falsehood of a hypothesis. But we may look at the purpose of tests from another viewpoint. Without hoping to know whether each separate hypothesis is true or false, we may search for rules to govern behaviour in regard to them, in following which we insure that, in the long run of experience, we shall not often be wrong.”

Neyman J, Pearson E. On the problem of the most efficient tests of statistical hypotheses. Philos Trans Roy Soc A, 1933;231:289–337.
