According to the Python Cookbook, “Mixing Unicode and regular expressions is often a good way to make your head explode.” It is thus with fear and trembling that I dip my toe into using Unicode with Greek and Hebrew.

I heard recently that there are anomalies in the Hebrew Bible where the final form of a letter is deliberately used in the middle of a word. That made me think about searching for such anomalies with regular expressions. I’ll come back to that shortly, but I’ll start by looking at Greek where things are a little simpler.

Greek

Only one letter in Greek has a variant form at the end of a word. Sigma is written ς at the end of a word and σ everywhere else. The following Python code shows we can search for sigmas and final sigmas in the first sentence from Matthew’s gospel.

import re

matthew = "Κατάλογος του γένους του Ιησού Χριστού, γιου του Δαυείδ, γιου του Αβραάμ"

matches = re.findall(r"\w+ς\b", matthew)

print("Words ending in sigma:", matches)

matches = re.findall(r"\w*σ\w+", matthew)

print("Words with non-final sigmas:", matches)

This prints

Words ending in sigma: ['Κατάλογος', 'γένους']

Words with non-final sigmas: ['Ιησού', 'Χριστού']

This shows that we can use non-ASCII characters in regular expressions, at least if they’re Greek letters, and that the metacharacters \w (word character) and \b (word boundary) work as we would hope.
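The Unicode-aware behavior of \w and \b is the default for str patterns in Python 3. As a minimal sketch (the sample string and variable names are illustrative, not from the example above), passing the re.ASCII flag restricts \w to ASCII letters, digits, and underscore, so the Greek word stops matching:

```python
import re

# Illustrative string: one Greek word and one ASCII word.
sample = "γιου abc"

# Default for str patterns: \w matches Unicode word characters.
unicode_words = re.findall(r"\w+", sample)

# With re.ASCII, \w only matches [a-zA-Z0-9_].
ascii_words = re.findall(r"\w+", sample, flags=re.ASCII)

print(unicode_words)  # ['γιου', 'abc']
print(ascii_words)    # ['abc']
```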

Hebrew

Now for the motivating example. Isaiah 9:6 contains the word “לםרבה” with the second letter (second from right) being the final form of Mem, ם, sometimes called “closed Mem” because the form of the letter ordinarily used in the middle of a word, מ, is not a closed curve [1].

Here’s code to show we could find this anomaly with regular expressions.

# There are five Hebrew letters with special final forms.

finalforms = "\u05da\u05dd\u05df\u05e3\u05e5" # ךםןףץ

lemarbeh = "\u05dc\u05dd\u05e8\u05d1\u05d4" # לםרבה

m = re.search(r"\w*[" + finalforms + r"]\w+", lemarbeh)

if m:
    print("Anomaly found:", m.group(0))
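It is worth checking that a pattern of this shape does not flag ordinary words, where final forms appear only at the end. The sketch below runs it over a made-up test string (two ordinary words followed by the anomalous one); the variable names text, pattern, and hits are mine.

```python
import re

finalforms = "\u05da\u05dd\u05df\u05e3\u05e5"  # the five final forms

# Two ordinary words (final form at the end) followed by the anomalous word.
text = (
    "\u05d0\u05dc\u05d4\u05d9\u05dd "      # ends in final mem
    "\u05d4\u05d0\u05e8\u05e5 "            # ends in final tsadi
    "\u05dc\u05dd\u05e8\u05d1\u05d4"       # final mem in second position
)

# A final form must be followed by at least one more word character to match.
pattern = re.compile(r"\w*[" + finalforms + r"]\w+")
hits = pattern.findall(text)

print(len(hits))  # 1
```

Only the word with the mid-word final form is reported, because the trailing \w+ requires at least one word character after the final form.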

As far as I know, the instance discussed above is the only one where a final letter appears in the middle of a word. And as far as I know, there are no instances of a non-final form being used at the end of a word. For the next example, we will put non-final forms at the end of words so we can find them.

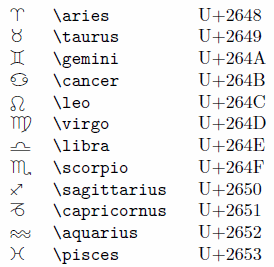

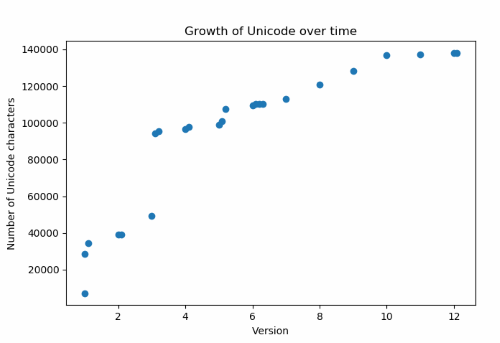

We’ll need a list of non-final forms. Notice that the Unicode value for each non-final form is 1 greater than the corresponding final form. It’s surprising that the final forms come before the non-final forms in numerical (Unicode) order, but that’s how it is. The same is true in Greek: final sigma is U+03c2 and sigma is U+03c3.

finalforms = "\u05da\u05dd\u05df\u05e3\u05e5" # ךםןףץ

nonfinal = "\u05db\u05de\u05e0\u05e4\u05e6" # כמנפצ
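The off-by-one relationship between the two strings can be checked directly. This is a small sketch using the standard unicodedata module; the names offsets and sigma_offset are illustrative.

```python
import unicodedata

finalforms = "\u05da\u05dd\u05df\u05e3\u05e5"
nonfinal = "\u05db\u05de\u05e0\u05e4\u05e6"

# Print each final form next to its non-final counterpart.
for f, n in zip(finalforms, nonfinal):
    print(f"U+{ord(f):04X} {unicodedata.name(f)} -> "
          f"U+{ord(n):04X} {unicodedata.name(n)}")

# Difference in code points for each Hebrew pair, and for Greek sigma.
offsets = [ord(n) - ord(f) for f, n in zip(finalforms, nonfinal)]
sigma_offset = ord("\u03c3") - ord("\u03c2")

print(offsets)       # [1, 1, 1, 1, 1]
print(sigma_offset)  # 1
```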

We’ll start by taking the first line of Genesis

genesis = "בראשית ברא אלהים את השמים ואת הארץ"

and introduce errors by replacing each final form with its corresponding non-final form. The code uses a somewhat obscure feature of Python and is analogous to the shell utility tr.

genesis_wrong = genesis.translate(str.maketrans(finalforms, nonfinal))

(The string method translate does what you would expect, except that it takes a translation table rather than a pair of strings as its argument. The maketrans method creates a translation table from two strings.)
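To see what the translation table looks like, here is a toy ASCII example (the strings are arbitrary, chosen only for illustration). The table is a dictionary mapping code points to code points.

```python
# Map 'e' -> 'i' and 'l' -> 'p', like `tr el ip` in the shell.
table = str.maketrans("el", "ip")
result = "hello".translate(table)

print(table)   # {101: 105, 108: 112}
print(result)  # hippo
```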

Now we can find our errors.

anomalies = re.findall(r"\w+[" + nonfinal + r"]\b", genesis_wrong)

print("Anomalies:", anomalies)

This prints

Anomalies: ['אלהימ', 'השמימ', 'הארצ']

Note that Python printed the letters left-to-right.
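However a terminal or browser chooses to display the text, the string itself is stored in logical (reading) order, so index 0 is the first letter read and index -1 is the last. The sketch below inspects the first anomaly from the output above; the variable names are mine.

```python
import unicodedata

word = "\u05d0\u05dc\u05d4\u05d9\u05de"  # first anomaly, as code points

# Character names in storage order: the first letter read comes first.
names = [unicodedata.name(ch) for ch in word]
for ch, name in zip(word, names):
    print(f"U+{ord(ch):04X} {name}")

# Each Hebrew letter carries the bidirectional class "R" (right-to-left),
# which is what display software uses to decide the visual order.
bidi = {unicodedata.bidirectional(ch) for ch in word}
print(bidi)  # {'R'}
```

The last stored character is the non-final mem, which is why the pattern anchored with \b finds it at the end of the word.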

Vowel points

The text above did not contain vowel points. If the text does contain vowel points, the regex engine treats them as separate characters sitting between the consonants.

For example, the regular expression “בר” will not match against “בְּרֵאשִׁית” because the regex engine sees two characters between ב and ר, the dot (“dagesh”) inside ב and the two dots (“sheva”) underneath ב. But the regular expression “ב..ר” does match against “בְּרֵאשִׁית”.
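The following sketch reproduces both matches and shows one possible workaround: removing the points before searching. The helper strip_points is hypothetical, and dropping every combining mark is an assumption that would also remove cantillation marks, which may or may not be what you want.

```python
import re
import unicodedata

# The pointed word, spelled out as code points so the order is explicit:
# bet, sheva, dagesh, resh, tsere, alef, shin, hiriq, shin dot, yod, tav.
pointed = "\u05d1\u05b0\u05bc\u05e8\u05b5\u05d0\u05e9\u05b4\u05c1\u05d9\u05ea"

bet_resh = "\u05d1\u05e8"  # bet immediately followed by resh

def strip_points(text):
    """Hypothetical helper: drop all combining marks from text."""
    decomposed = unicodedata.normalize("NFD", text)
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch))

unpointed = strip_points(pointed)

direct = re.search(bet_resh, pointed)              # points intervene
with_dots = re.search("\u05d1..\u05e8", pointed)   # two wildcards absorb them
after_strip = re.search(bet_resh, unpointed)       # consonants only

print(direct)                 # None
print(with_dots is not None)  # True
print(after_strip is not None)  # True
```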

See footnote [1].

Python

I started this post with a warning from the Python Cookbook that mixing regular expressions and Unicode can cause your head to explode. So far my head is intact, but I’ve only tested the waters. I’m sure there are dragons in the deeper end.

I should include the rest of the book’s warning. I used the default library re that ships with Python, but the authors recommend that if you’re serious about using regular expressions with Unicode,

… you should consider installing the third-party regex library which provides full support for Unicode case folding, as well as a variety of other interesting features, including approximate matching.


[1] When I refer to “בר” as a regular expression, I mean the character sequence ב followed by ר, which your browser will display from right to left because it detects it as characters from a language written from right to left. Written in terms of Unicode code points this would be “\u05d1\u05e8”, the code point for ב followed by the code point for ר.

Similarly, the second regular expression could be written “\u05d1..\u05e8”. The two periods in the middle are just two instances of the regular expression symbol that matches any character. It’s a coincidence that dots (periods) are being used to match dots (the dagesh and the sheva).