There are two techniques in software development that have an almost gnostic mystique about them: monads and macros.

Pride and pragmatism

As with everything people do, monads and macros are used with mixed motives, for pride and for pragmatism.

As for pride, monads and macros have just the right barrier to entry: high enough to keep out most programmers, but not so high as to be insurmountable with a reasonable amount of effort. They’re challenging to learn, but not so challenging that you can’t show off what you’ve learned to a wide audience.

As for pragmatism, both monads and macros can be powerful in the right setting. They’re both a form of information hiding.

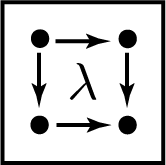

Monads let you concentrate on functions by providing a sort of side channel for information associated with those functions. For example, you may have a drawing program and want to compose a rotation and a stretch. These may be ostensibly pure functions, but they also update a log of operations that lets you undo actions. It would be burdensome to treat this log as an explicit argument passed into and returned from every operation, so you might keep the log information in a monad.
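To make the drawing example concrete, here is a minimal sketch of a log carried in a monad. It hand-rolls a small Writer-style type rather than using a library, and every name in it (`Logged`, `record`, `rotate90`, `stretch`, `transform`) is hypothetical, chosen only for illustration.

```haskell
-- A hand-rolled Writer-style monad: a value paired with a log of operations.
-- All names here are illustrative assumptions, not from any particular library.
newtype Logged a = Logged (a, [String])

-- Unwrap the final value together with the accumulated log.
runLogged :: Logged a -> (a, [String])
runLogged (Logged p) = p

instance Functor Logged where
  fmap f (Logged (x, w)) = Logged (f x, w)

instance Applicative Logged where
  pure x = Logged (x, [])
  Logged (f, w1) <*> Logged (x, w2) = Logged (f x, w1 ++ w2)

instance Monad Logged where
  -- Run the next step and append its log to the log so far.
  Logged (x, w1) >>= k =
    let Logged (y, w2) = k x
    in Logged (y, w1 ++ w2)

-- Append one entry to the log.
record :: String -> Logged ()
record msg = Logged ((), [msg])

type Point = (Double, Double)

-- Rotate a point 90 degrees counterclockwise about the origin.
rotate90 :: Point -> Logged Point
rotate90 (x, y) = do
  record "rotate90"
  pure (negate y, x)

-- Stretch a point horizontally by a factor k.
stretch :: Double -> Point -> Logged Point
stretch k (x, y) = do
  record ("stretch " ++ show k)
  pure (k * x, y)

-- Compose the two operations; the log is threaded behind the scenes.
transform :: Point -> Logged Point
transform p = rotate90 p >>= stretch 2

main :: IO ()
main = do
  let (p, history) = runLogged (transform (1, 2))
  print p
  print history
```

Neither `rotate90` nor `stretch` takes the log as an argument or returns it explicitly, yet `history` ends up listing both operations in order. An undo feature could walk that list backwards.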

Macros let you hide the fact that your programming language doesn’t have features that you would like. Operator overloading is an example of adding the appearance of a new feature to a programming language. Macros take this much further, for better or for worse. If you think operator overloading is good because of its potential to clarify code, you’ll like macros. If you think operator overloading is bad because of its potential for misuse, you definitely won’t like macros.

Mutually exclusive

Few people are excited about both monads and macros; only one person that I know comes to mind.

Monads and macros appeal to opposite urges: the urge to impose rules and the urge to get around rules. There is a time for both, a time to build structure and a time to tear structure down.

Monads are most popular in Haskell, and macros in Lisp. These are very different languages and their communities have very different values [1].

The ideal Haskell program has such rigid structure that only correct code will compile. The ideal Lisp program is so flexible that it is essentially a custom programming language.

A Haskell programmer would like to say that a program is easy to reason about because of its mathematical structure. A Lisp programmer would like to say a program is easy to read because it maps well onto the problem at hand.

Lisp enthusiast Doug Hoyte says in his book Let Over Lambda:

“As we now know, nobody truly understands macros.”

A Haskell programmer would find this horrifying, but a Lisp programmer would consider it an acceptable price to pay or even something fun to explore.


[1] Here I’m referring to archetypes, generalizations but not exaggerations. Of course no language community is monolithic, and an individual programmer will have different approaches to different tasks. But as a sweeping generalization, Haskell programmers value structure and Lisp programmers value flexibility.