Take a permutation of the numbers 1 through n² and lay out the elements of the permutation in a square. We will call a permutation a magic permutation if the corresponding square is a magic square. What is the probability that a permutation is a magic permutation? That is, if you fill a grid randomly with the numbers 1 through n², how likely are you to get a magic square?

For example, we could generate a random permutation in Python and see whether it forms a magic square.

>>> import numpy as np

>>> np.random.permutation(9).reshape(3,3)

array([[4, 7, 3],

[2, 6, 5],

[8, 0, 1]])

The first row adds up to 14 and the second row adds up to 13, so this isn’t a magic square. We could write a script to do this over and over and check whether the result is a magic square.

The exact number of magic squares of size n is known for n up to 5, and we have Monte Carlo estimates for larger values of n.

The number of unique magic squares, modulo rotations and reflections, of size 1 through 5 is

1, 0, 1, 880, 275305224.

For n > 2 the total number of magic squares, counting rotations and reflections as different squares, is 8 times larger than the numbers above. This is because the group of rotations and reflections of a square, D4, has 8 elements.

The probability that a permutation of the numbers 1 through 9, arranged in a square, gives a magic square is 8/9!. The corresponding probability for the numbers 1 through 16 is 8 × 880/16!, and for the numbers 1 through 25 we have 8 × 275305224/25!.

|---+-------------|

| n | Prob(magic) |

|---+-------------|

| 3 | 2.20459e-05 |

| 4 | 3.36475e-10 |

| 5 | 1.41990e-16 |

|---+-------------|

It looks like the exponents are in roughly a linear progression, so maybe you could fit a line fairly well to the points on a logarithmic scale. In fact, empirical studies suggest that the probability that a permutation of the first n² positive integers is magic decreases a little faster than exponentially.

We could fit a linear regression to the logs of the numbers above to come up with an estimate for the result for n = 6. We expect the estimate could be pretty good, and likely an upper bound on the correct answer. Let’s see what actually happens with a little R code.

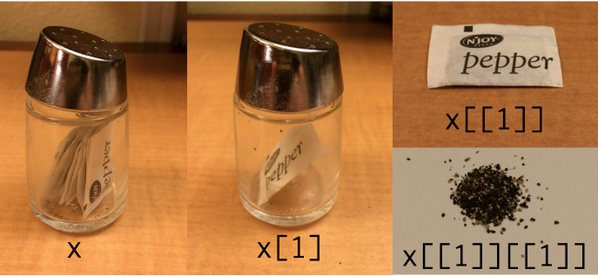

> x <- c(3,4,5)

> y <- 8*c(1/factorial(9), 880/factorial(16), 275305224/factorial(25))

> m <- lm(log(y) ~ x)

> predict(m, data.frame(x=c(6)))

1

-48.77694

This says we’d estimate that the natural log of the probability that a permutation of the first 6² positive integers is magic is -48.77694. So the estimate of the probability itself is

exp(-48.77694) = 6.55306 × 10-22

We don’t know the number of magic squares of size n = 6, but the number of distinct squares has been estimated [1] at

(0.17745 ± 0.00016) × 1020

and so the total number including rotations and reflections would be 8 times higher. This says we’d expect our probability to be around

8 × 0.17745 × 1020 / 36! = 3.18162 × 10-22

So our estimate is off by about a factor of 2, and as predicted it does give an upper bound.

Looking back at our regression model, the slope is -12.88 and the intercept is 28.53. This suggests that an upper bound of the probability of a permutation of size n² being magical is

exp(28.53 – 12.88 n).

In closing I’d like to point out that the estimate for n = 6 that we’re referencing above did not come from simply permuting 36 integers over and over and counting how many of the permutations correspond to magic squares. That would take far too long. It’s quite likely that after the first billion billion tries you would not have seen a magic permutation. To estimate such a small number to four significant figures requires a more sophisticated Monte Carlo procedure.

Related posts

[1] See OEIS sequence A006052