A basic question to ask about a network is which nodes are most important. A simple way of answering this question would be to say the most well-connected nodes, i.e. the nodes with the most edges. This approach is known as degree centrality. It’s not a bad place to start. It’s easy to understand and easy to compute. It may be adequate for some purposes, but it’s often helpful to take a more sophisticated approach known as eigenvector centrality.

Examples

Let’s look at a few motivating examples before we get into the details.

Social network marketing

If you wanted to advertise something, say a book you’ve written, and you’re hoping people will promote it on Twitter. Would you rather get a shout out from someone with more followers or someone with less followers? All else being equal, more followers is better. But even better would be a shout out from someone whose followers have a lot of followers.

Deans and flight attendants

Suppose you’re at a graduation ceremony. Your mind starts to wander after the first few hundred people walk across the stage, and you start to think about how a cold might spread through the crowd. The dean handing out diplomas could be a superspreader because he’s shaking hands with everyone as they receive their diplomas. But an inconspicuous parent in the audience may also be a superspreader because she’s a flight attendant and will be in a different city every day for the next few days. And not only is she a traveler, she’s in contact with travelers.

Search engines

Ranking web pages according to the number of inbound links they have was a good idea because this takes advantage of revealed preferences: instead of asking people what pages they recommend, you can observe what pages they implicitly recommend by linking to them. An even better idea was Page Rank, weighing inbound links by how many links the linking pages have.

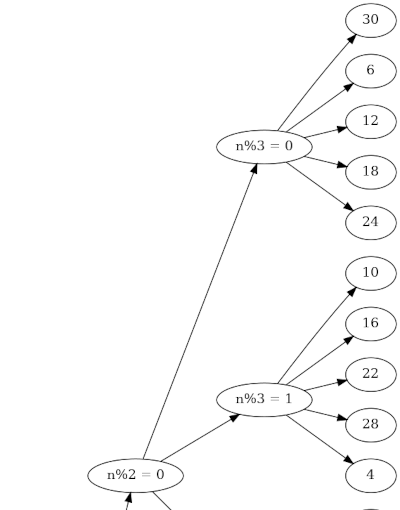

Eigenvector centrality

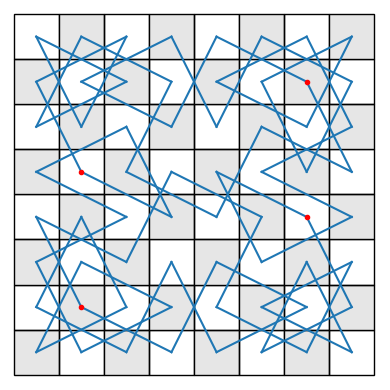

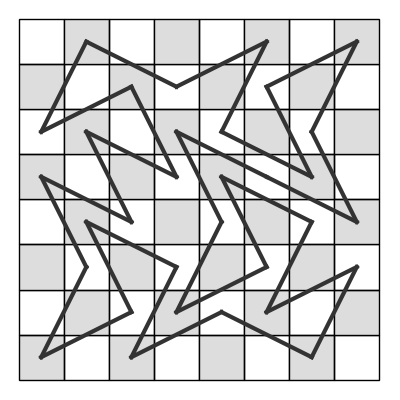

The idea of eigenvector centrality is to give each node a rank proportional to the sum of the ranks of the adjacent nodes. This may seem circular, and it kinda is.

To know the rank of a node, you have to know the ranks of the nodes linking to it. But to know their ranks, you have to know the ranks of the nodes linking to them, etc. There is no sequential solution to the problem because you’d end up in an infinite regress, even for a finite network. But there is a simultaneous solution, considering all pages at once.

You want to assign to the ith node a rank xi proportional to the sum of the ranks of all adjacent nodes. A more convenient way to express this to compute the weighted sum of the ranks of all nodes, with weight 1 for adjacent nodes and weight 0 for non-adjacent nodes. That is, you want to compute

where the a‘s are the components of the adjacency matrix A. Here aij equals 1 if there is an edge between nodes i and j and it equals 0 otherwise.

This is equivalent to looking for solutions to the system of equations

where A is the adjacency matrix and x is the vector of node ranks. If there are N nodes, then A is an N × N matrix and x is a column vector of length N.

In linear algebra terminology, x is an eigenvector of the adjacency matrix A, hence the name eigenvector centrality.

Mathematical questions

There are several mathematical details to be concerned about. Does the eigenvalue problem defining x have a solution? Is the solution unique (up to scalar multiples)? What about the eigenvalue λ? Does the adjacency matrix have multiple eigenvalues, which would mean there are multiple eigenvectors?

If A is the adjacency matrix for a connected graph, then there is a unique eigenvalue λ of largest magnitude, there is a unique corresponding eigenvector x, and all the components of x are non-negative. It is often said that this is a result of the Perron–Frobenius theorem, but there’s a little more to it than that because you need the hypothesis that the graph is connected.

The matrix A is non-negative, but not necessarily positive: some entries may be zero. But if the graph is connected, there’s a path between any node i and another node j, which means for some power of A, the ij entry of A is not zero. So although A is not necessarily positive, some power of A is positive. This puts us in the positive case of the Perron–Frobenius theorem, which is nicer than the non-negative case.

This section has discussed graphs, but Page Rank applies to directed graphs because links have a direction. But similar theorems hold for directed graphs.

Related posts