The previous post was about Kepler’s observation that the planets were spaced out around the sun the same way that nested regular solids would be. Kepler only knew of six planets, which was very convenient: six planetary spheres leave five gaps, one for each of the five regular solids. In fact, Kepler thought there could only be six planets because there are only five regular solids.

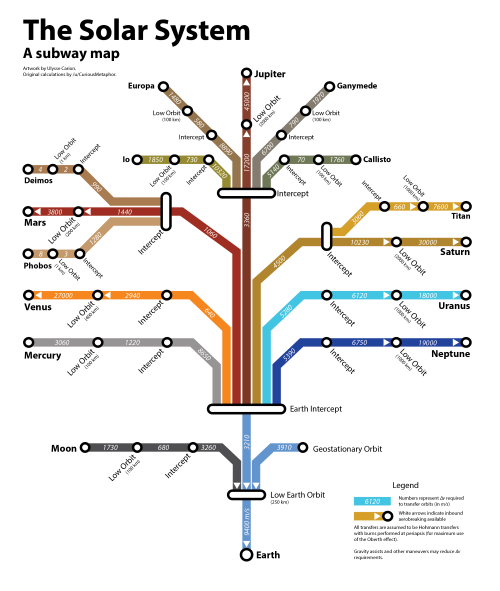

The distances of the planets from the sun form roughly a geometric series: ratios of consecutive distances are between 1.3 and 3.4. A geometric series becomes an arithmetic one on a logarithmic scale, since log(a r^n) = log a + n log r, so the distances should be fairly evenly spaced on a log scale. The plot below shows that this is the case.
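To make the ratio claim concrete, here is a small Python sketch. The semimajor axes in it are rounded reference values in AU that I've supplied for illustration (they are not read off the plot). The script prints each consecutive ratio and its base-10 logarithm; roughly constant ratios show up as roughly constant steps in log distance.

```python
import math

# Approximate semimajor axes in AU for the six planets Kepler knew
# (rounded reference values, supplied here for illustration).
planets = [
    ("Mercury", 0.387),
    ("Venus", 0.723),
    ("Earth", 1.000),
    ("Mars", 1.524),
    ("Jupiter", 5.203),
    ("Saturn", 9.537),
]

def consecutive_ratios(distances):
    """Ratio of each distance to the one just inside it."""
    return [outer / inner for inner, outer in zip(distances, distances[1:])]

distances = [d for _, d in planets]
ratios = consecutive_ratios(distances)
# The log of a ratio is the size of the step between neighbors on a log scale.
log_steps = [math.log10(r) for r in ratios]

for (inner, _), (outer, _), r, step in zip(planets, planets[1:], ratios, log_steps):
    print(f"{outer:>8} / {inner:<8} ratio {r:4.2f}  log10 step {step:5.3f}")
```

The largest ratio is the Mars to Jupiter gap, which is where the asteroid belt sits.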

The plot goes beyond the six planets known in Kepler’s day and adds four more: Uranus, Neptune, Pluto, and Eris. Here I’m counting Pluto and Eris, the two largest trans-Neptunian objects, as planets. Distances are measured in astronomical units, so Earth’s distance is 1.

Update: The fit is even better if we include Ceres, the largest object in the asteroid belt. It’s now called a dwarf planet, but it was considered a planet for the first fifty years after it was discovered.
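One way to quantify "the fit is even better" is to regress log distance on each body's position in the list and compare the coefficient of determination R² with and without Ceres. The sketch below does that with a hand-rolled least-squares fit. The semimajor axes are rounded reference values I've supplied, and R² is my choice of fit measure, not necessarily the one behind the plot.

```python
import math

# Approximate semimajor axes in AU, Mercury through Eris
# (rounded reference values, assumed for illustration).
WITHOUT_CERES = [0.387, 0.723, 1.000, 1.524, 5.203, 9.537, 19.19, 30.07, 39.48, 67.7]
CERES = 2.77  # falls between Mars (index 3) and Jupiter (index 4)
WITH_CERES = WITHOUT_CERES[:4] + [CERES] + WITHOUT_CERES[4:]

def log_linear_fit(distances):
    """Least-squares fit of log10(distance) = a + b*n for n = 0, 1, 2, ...

    Returns (common_ratio, r_squared), where common_ratio = 10**b is the
    geometric ratio implied by the fitted slope.
    """
    xs = list(range(len(distances)))
    ys = [math.log10(d) for d in distances]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    r_squared = sxy ** 2 / (sxx * syy)
    return 10 ** slope, r_squared

for label, data in [("without Ceres", WITHOUT_CERES), ("with Ceres", WITH_CERES)]:
    ratio, r2 = log_linear_fit(data)
    print(f"{label:>13}: common ratio {ratio:.3f}, R^2 {r2:.4f}")
```

With these inputs R² goes up when Ceres is added, consistent with the update above.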

Extrasolar planets

Is this just true of our solar system, or is it true of other planetary systems as well? At the time of writing, we know of one planetary system outside our own that has 8 planets. Three other systems have around 7 planets; I say “around” because it depends on whether you include unconfirmed planets. The spacing between planets in these other systems is also fairly even on a logarithmic scale. Data on planet distances is taken from each system’s Wikipedia page. Distances are semimajor axes, not average distances.
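The difference between semimajor axis and average distance is small for nearly circular orbits. For an orbit with semimajor axis a, eccentricity e, and period T, the distance from the star averaged over time is a standard result:

```latex
\langle r \rangle_t = \frac{1}{T}\int_0^T r(t)\,dt = a\left(1 + \frac{e^2}{2}\right)
```

So even for Mercury, with e ≈ 0.21, the time-averaged distance exceeds a by only about 2%, a shift that is hard to see on a logarithmic scale.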

This post just shows plots. See this follow-up post for regression results.

Kepler-90

Kepler-90 is the only planetary system outside our own with eight confirmed planets that we know of.

HD 10180

HD 10180 has seven confirmed planets and two unconfirmed planets. The plot below includes the unconfirmed planets as the 3rd and 6th objects.

HR 8832

The next largest system is HR 8832, with five confirmed planets and two unconfirmed ones (numbers 5 and 6 below). It would work out well for this post if the 6th object were found to be a little closer to its star.

TRAPPIST-1

TRAPPIST-1 is interesting because the planets are very close to their star, ranging from 0.01 to 0.06 AU. Also, this system follows even logarithmic spacing more closely than the others.
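A crude way to compare how even the spacing is: compute the consecutive distance ratios for a system and divide the largest by the smallest. A value of 1 would mean a perfect geometric series. The TRAPPIST-1 semimajor axes in the code are approximate published values supplied for this sketch, not necessarily the exact numbers behind the plot, and the spread measure is my own choice for illustration.

```python
# Approximate semimajor axes in AU (assumed values; check current sources).
TRAPPIST_1 = [0.01154, 0.01580, 0.02227, 0.02925, 0.03849, 0.04683, 0.06189]
SOLAR_SIX = [0.387, 0.723, 1.000, 1.524, 5.203, 9.537]  # Mercury through Saturn

def ratio_spread(distances):
    """Largest consecutive distance ratio divided by the smallest.

    Equals 1.0 for a perfect geometric series; larger values mean
    less even spacing on a log scale.
    """
    ratios = [outer / inner for inner, outer in zip(distances, distances[1:])]
    return max(ratios) / min(ratios)

print(f"TRAPPIST-1 spread: {ratio_spread(TRAPPIST_1):.2f}")
print(f"Solar system (6 planets) spread: {ratio_spread(SOLAR_SIX):.2f}")
```

Because it only looks at the extreme ratios, this measure ignores how the other gaps are distributed; a regression like the one in the follow-up post uses all the points.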

Systems with six planets

There are currently four known systems with six planets: Kepler-11, Kepler-20, HD 40307, and HD 34445. They also appear to have planets evenly spaced on a log scale.

Like TRAPPIST-1, Kepler-20 has planets that orbit closer to their star and are more evenly spaced on a log scale.