I find simple approximations more interesting than highly accurate approximations. Highly accurate approximations can be interesting too, in a different way. Somebody has to write the code to compute special functions to many decimal places, and sometimes that person has been me. But somewhat ironically, these complicated approximations are better known than simple approximations.

One reason I find simple approximations interesting is that they suggest further exploration. For example, the approximation for the perimeter of an ellipse here is interesting because of the connection to r-means. There are more accurate approximations, but not more interesting approximations, in my opinion.

Another reason I find simple approximations interesting is that if they are simple enough to be memorable and easy to evaluate, they’re useful for quick mental calculations.

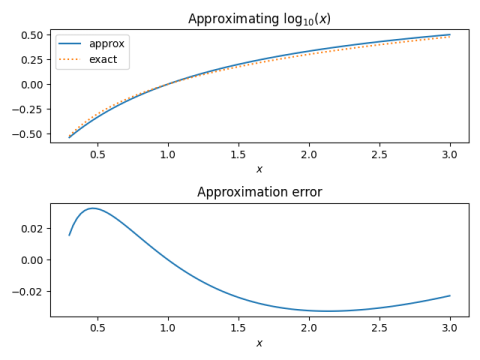

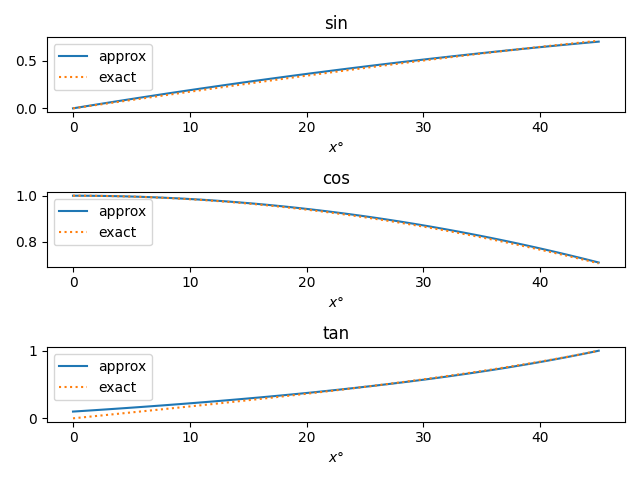

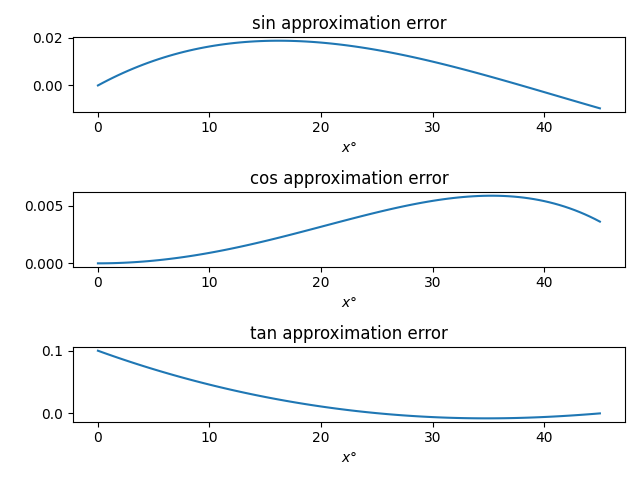

I’ve written several posts about simple approximations, and this may be the last one, at least for a while. I’ve covered trig functions, logs and exponents. The most commonly used function I haven’t discussed is the gamma function, which I cover now.

Gamma

The most important function that’s not likely to be on a calculator is the gamma function Γ(x). This function comes up all the time in probability and statistics, as well as in other areas of math.

The gamma function satisfies

Γ(x + 1) = x Γ(x),

and so you can evaluate the function anywhere if you can evaluate it on an interval of length 1. For example, the next section gives an approximation on the interval [2, 3]. We could reduce calculating Γ(4.2) to a problem on that interval by applying the recurrence twice:

Γ(4.2) = 3.2 Γ(3.2) = 3.2 × 2.2 Γ(2.2)
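Here's a minimal Python sketch of that reduction, assuming x > 0. The function name `reduce_to_2_3` is made up for illustration, and `math.gamma` from the standard library stands in for whatever you use to evaluate Γ on [2, 3].

```python
import math

def reduce_to_2_3(x):
    """Return (factor, y) with 2 <= y <= 3 and Gamma(x) == factor * Gamma(y).

    Illustrative helper, not from the post. Assumes x > 0 and applies
    Gamma(x + 1) = x * Gamma(x) in whichever direction is needed.
    """
    if x <= 0:
        raise ValueError("this sketch only handles x > 0")
    factor = 1.0
    while x > 3:
        x -= 1
        factor *= x      # Gamma(x + 1) = x * Gamma(x)
    while x < 2:
        factor /= x      # Gamma(x) = Gamma(x + 1) / x
        x += 1
    return factor, x

factor, y = reduce_to_2_3(4.2)
print(factor, y)                 # roughly 7.04 and 2.2
print(factor * math.gamma(y))    # agrees with math.gamma(4.2) up to rounding
```

The same loop handles arguments below 2 by dividing instead of multiplying, so Γ(0.5) reduces to Γ(2.5) divided by 0.5 × 1.5.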

Simple approximation

It turns out that

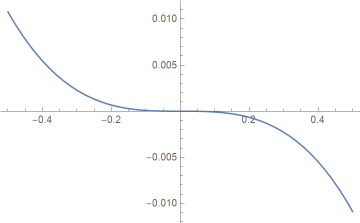

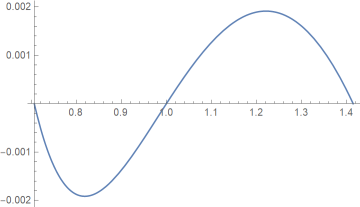

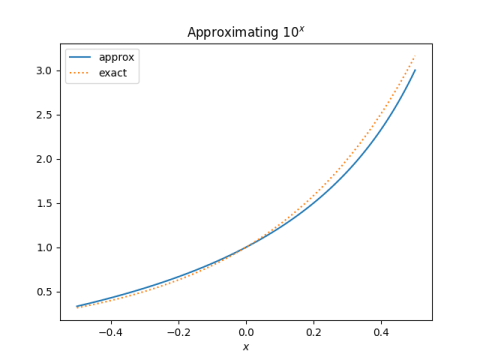

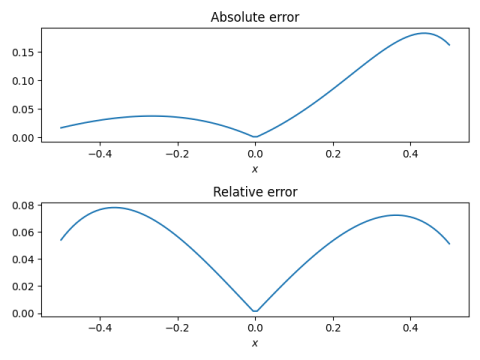

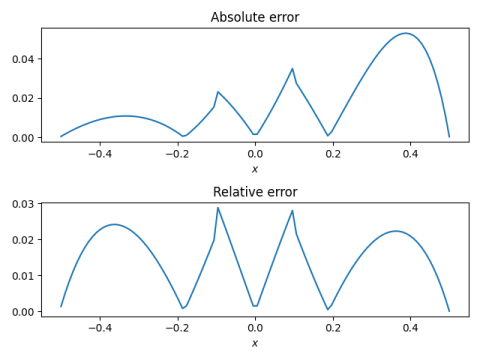

Γ(x) ≈ 2/(4 − x)

for 2 ≤ x ≤ 3. The approximation is exact at the endpoints, and the relative error is less than 0.9% over the interval.
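If you'd like to check the error claim yourself, here's a short Python sketch that compares the approximation to `math.gamma` on a grid of 1001 points. The names `gamma_approx` and `relative_error` are illustrative, and a grid search only estimates the maximum rather than proving a bound.

```python
import math

def gamma_approx(x):
    """Rational approximation 2/(4 - x), intended for 2 <= x <= 3."""
    return 2.0 / (4.0 - x)

def relative_error(x):
    """Relative error of gamma_approx against math.gamma at x."""
    exact = math.gamma(x)
    return abs(gamma_approx(x) - exact) / exact

# Evenly spaced grid on [2, 3], endpoints included.
grid = [2 + i / 1000 for i in range(1001)]
worst = max(grid, key=relative_error)
print(worst, relative_error(worst))

# Combine with the recurrence: Gamma(4.2) = 3.2 * 2.2 * Gamma(2.2).
print(3.2 * 2.2 * gamma_approx(2.2), math.gamma(4.2))  # about 7.82 versus 7.76
```

The recurrence multiplies by exact factors, so the relative error at 4.2 is the same as the relative error at 2.2.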

An even simpler approximation over the interval [2, 3] is

Γ(x) ≈ x − 1.

It has a maximum relative error of about 13%, so it’s far less accurate, but it’s trivial to compute in your head.
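The 13% figure can be checked the same way. Since Γ(x) = (x − 1) Γ(x − 1), the ratio of the approximation to the exact value is 1/Γ(x − 1), so the worst case falls where Γ is smallest on [1, 2]. The sketch below, with the illustrative name `linear_gamma`, finds that point on a grid.

```python
import math

def linear_gamma(x):
    """Linear approximation x - 1, intended for 2 <= x <= 3."""
    return x - 1.0

def linear_relative_error(x):
    """Relative error of linear_gamma against math.gamma at x."""
    exact = math.gamma(x)
    return abs(linear_gamma(x) - exact) / exact

# Evenly spaced grid on [2, 3], endpoints included.
grid = [2 + i / 1000 for i in range(1001)]
worst_x = max(grid, key=linear_relative_error)
worst_err = linear_relative_error(worst_x)
print(worst_x, worst_err)  # near x = 2.46, a little under 13%
```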

Derivation

The way I found the rational approximation was to use Mathematica to find the best bilinear approximation, meaning a ratio of two linear functions, using

MiniMaxApproximation[Gamma[x], {x, {2, 3}, 1, 1}]

and rounding the coefficients. In hindsight, I could have simply used bilinear interpolation. I did use linear interpolation to derive the approximation x − 1.
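As a rough illustration of the interpolation route, here's a Python sketch that fits both forms to the endpoint values Γ(2) = 1 and Γ(3) = 2. The two-parameter form a/(b − x) is an assumption made for this example: a general bilinear function has three free parameters, and the post doesn't say which form or which points would be used. The function names are hypothetical.

```python
from fractions import Fraction

def linear_through(p, q):
    """Return (slope, intercept) of the line through points p and q."""
    (x0, y0), (x1, y1) = p, q
    slope = Fraction(y1 - y0, x1 - x0)
    return slope, y0 - slope * x0

def reciprocal_through(p, q):
    """Return (a, b) such that a/(b - x) passes through p and q.

    Assumed form, for illustration only. Setting
    a = y0*(b - x0) = y1*(b - x1) and solving gives b, then a.
    """
    (x0, y0), (x1, y1) = p, q
    b = Fraction(y1 * x1 - y0 * x0, y1 - y0)
    a = y0 * (b - x0)
    return a, b

endpoints = ((2, 1), (3, 2))  # (x, Gamma(x)) at x = 2 and x = 3
print(linear_through(*endpoints))      # slope 1, intercept -1, i.e. x - 1
print(reciprocal_through(*endpoints))  # a = 2, b = 4, i.e. 2/(4 - x)
```

Under that assumed form, matching the endpoints alone reproduces 2/(4 − x) with no rounding needed.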