Differential privacy can be rigid and overly conservative in practice, and so finding ways to relax pure differential privacy while retaining its benefits is an active area of research. Two approaches to doing this are concentrated differential privacy [1] and Rényi differential privacy [3].

Concentrated differential privacy was used in reporting results from the 2020 US Census, specifically zero-concentrated differential privacy with Gaussian noise.

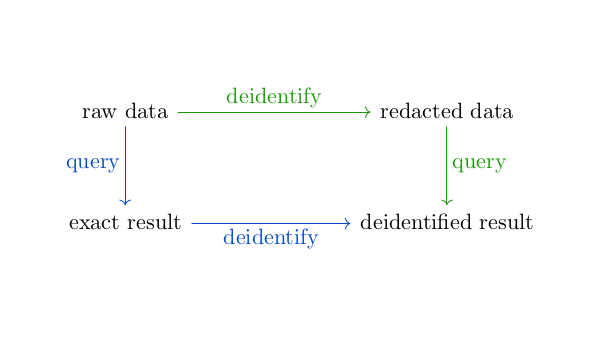

Differential privacy quantifies the potential impact of an individual’s participation or lack of participation in a database and seeks to bound the difference. The original proposal for differential privacy and the approaches discussed here differ in how they measure the difference an individual can make. Both concentrated differential privacy (CDP) and Rényi differential privacy (RDP) use Rényi divergence, though they use it in different ways.
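
To make the Rényi divergence concrete, here is a minimal sketch for discrete distributions, using the standard definition D_α(P || Q) = log(Σ p_i^α q_i^(1−α)) / (α − 1) for α > 1. The function name `renyi_divergence` and the randomized response example are illustrative assumptions, not something taken from the papers cited here.

```python
import math

def renyi_divergence(p, q, alpha):
    """Rényi divergence D_alpha(p || q), in nats, for discrete distributions.

    Assumes p and q list probabilities over the same outcomes,
    with q[i] > 0 wherever p[i] > 0, and alpha > 1.
    """
    if alpha <= 1:
        raise ValueError("this sketch only handles alpha > 1")
    if math.isinf(alpha):
        # Order infinity is the largest log-ratio, the quantity pure DP bounds.
        return max(math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    total = sum(pi**alpha * qi ** (1 - alpha) for pi, qi in zip(p, q) if pi > 0)
    return math.log(total) / (alpha - 1)

# Randomized response: report the true bit with probability 0.75.
p = [0.75, 0.25]  # output distribution when the true bit is 0
q = [0.25, 0.75]  # output distribution when the true bit is 1

for alpha in [2, 8, 32, math.inf]:
    print(alpha, renyi_divergence(p, q, alpha))
```

The printed values increase with α and approach log 3 ≈ 1.0986, which is the ε that pure differential privacy would assign to this mechanism. Bounding the divergence at a finite order is a weaker requirement than bounding it at order infinity.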

In [3], Mironov discusses the similarities and differences between CDP and RDP in the passage quoted below. (I changed Mironov’s reference numbers to the reference numbers used here.)

> The closely related work by Dwork and Rothblum [1], followed by Bun and Steinke [2], explore privacy definitions—Concentrated Differential Privacy and zero-Concentrated Differential Privacy—that are framed using the language of, respectively, subgaussian tails and the Rényi divergence. The main difference between our approaches is that both Concentrated and zero-Concentrated DP require a linear bound on all positive moments of a privacy loss variable. In contrast, our definition applies to one moment at a time. Although less restrictive, it allows for more accurate numerical analyses.

(α, ε)-RDP fixes values of α and ε and requires that the Rényi divergence of order α between the output distributions of a randomized mechanism M on any two adjacent databases, i.e. databases that differ in the data of one individual, be bounded by ε.
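
As a rough illustration of checking an RDP guarantee one order at a time, the sketch below looks at a Gaussian mechanism, i.e. adding N(0, σ²) noise to a query whose value changes by at most `sensitivity` between adjacent databases. It relies on the standard closed form for the Rényi divergence between two Gaussians with equal variance, α·sensitivity²/(2σ²), and sanity-checks that formula by numerical integration. The function names and parameter values are assumptions made for the example.

```python
import math

def gaussian_rdp_epsilon(alpha, sigma, sensitivity=1.0):
    """Closed-form Rényi divergence of order alpha, in nats, between
    N(0, sigma^2) and N(sensitivity, sigma^2)."""
    return alpha * sensitivity**2 / (2 * sigma**2)

def numeric_rdp_epsilon(alpha, sigma, sensitivity=1.0, steps=20_000):
    """Midpoint-rule estimate of the same divergence, as a sanity check."""
    def log_pdf(x, mean):
        z = (x - mean) / sigma
        return -0.5 * z * z - math.log(sigma * math.sqrt(2 * math.pi))

    # Wide enough to cover the bulk of the integrand for moderate alpha.
    half_width = 12 * sigma + alpha * abs(sensitivity)
    dx = 2 * half_width / steps
    total = 0.0
    for i in range(steps):
        x = -half_width + (i + 0.5) * dx
        # Integrand p(x)^alpha * q(x)^(1 - alpha), computed in log space.
        total += math.exp(alpha * log_pdf(x, 0.0)
                          + (1 - alpha) * log_pdf(x, sensitivity))
    return math.log(total * dx) / (alpha - 1)

def satisfies_rdp(alpha, epsilon, sigma, sensitivity=1.0):
    """True if this Gaussian mechanism meets (alpha, epsilon)-RDP."""
    return gaussian_rdp_epsilon(alpha, sigma, sensitivity) <= epsilon

print(satisfies_rdp(alpha=2, epsilon=1.0, sigma=1.0))  # order 2 only
print(satisfies_rdp(alpha=4, epsilon=1.0, sigma=1.0))  # a different order
```

With σ = 1 the mechanism meets (2, 1)-RDP but not (4, 1)-RDP: each (α, ε) pair is a separate statement about a single order.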

Zero-concentrated differential privacy (zCDP) with parameters ε and ρ requires that the Rényi divergence of order α between the outputs of M on adjacent databases be bounded by ε + ρα for all α in (1, ∞).
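
The zCDP condition is a single linear bound that must hold across every order at once. The sketch below spot-checks that bound on a grid of α values for a Gaussian mechanism, whose Rényi divergence of order α is α·sensitivity²/(2σ²), so it meets the definition with ε = 0 and ρ = sensitivity²/(2σ²). A grid check is only evidence, not a proof, since the definition quantifies over all α in (1, ∞). The helper names and the grid are assumptions made for the example.

```python
def gaussian_divergence(alpha, sigma, sensitivity=1.0):
    """Rényi divergence of order alpha between N(0, sigma^2)
    and N(sensitivity, sigma^2), using the standard closed form."""
    return alpha * sensitivity**2 / (2 * sigma**2)

def zcdp_bound_holds_on_grid(divergence, epsilon, rho, alphas, tol=1e-12):
    """Spot-check D_alpha <= epsilon + rho * alpha at each alpha in `alphas`."""
    return all(divergence(a) <= epsilon + rho * a + tol for a in alphas)

sigma = 2.0
rho = 1.0 / (2 * sigma**2)                     # sensitivity 1, so rho = 0.125
alphas = [1 + 0.5 * k for k in range(1, 200)]  # orders 1.5, 2.0, ..., 100.5

def divergence(alpha):
    return gaussian_divergence(alpha, sigma)

print(zcdp_bound_holds_on_grid(divergence, 0.0, rho, alphas))      # True
print(zcdp_bound_holds_on_grid(divergence, 0.0, rho / 2, alphas))  # False
```

Halving ρ makes the bound fail at every order on the grid, since the Gaussian mechanism's divergence sits exactly on the line ρα.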

The pros and cons of zCDP and RDP are complicated. For more details, see the references below.

[1] Cynthia Dwork and Guy Rothblum. Concentrated differential privacy. CoRR, abs/1603.01887, 2016.

[2] Mark Bun and Thomas Steinke. Concentrated Differential Privacy: Simplifications, Extensions, and Lower Bounds. arXiv:1605.02065 [cs.CR], 2016.

[3] Ilya Mironov. Rényi Differential Privacy. arXiv:1702.07476 [cs.CR], 2017.