The most obvious way to compute the determinant of a 2×2 matrix can be numerically inaccurate. The biggest problem with computing ad − bc is that if ad and bc are approximately equal, the subtraction could lose a lot of precision. William Kahan developed an algorithm for addressing this problem.

Fused multiply-add (FMA)

Kahan’s algorithm depends on a fused multiply-add function. This function computes xy + z using one rounding operation at the end, where the direct approach would use two.

In more detail, the fused multiply-add behaves as if it takes its the floating point arguments x, y, and z and lifts them to the Platonic realm of real numbers, calculates xy + z exactly, and then brings the result back to the world of floating point numbers. The true value of xy + z may not have an exact representation in the floating point world, and so in general it will be necessary to round the result.

The direct way of computing xy + z would behave as if it first lifts x and y to the Platonic realm, computes xy exactly, then pushes the result back down to the floating point world, rounding the result. Then it takes this rounded version of xy back up to the Platonic realm, possibly to a different value than before, and pushes z up, adds the two numbers, then pushes the sum back down to the world of floating point.

You can get more accuracy if you avoid round trips back and forth to the Platonic realm. If possible, you’d like to push everything up to the Platonic realm once, do as much work as you can up there, then come back down once. That’s what fused multiply-add does.

Kahan’s determinant algorithm

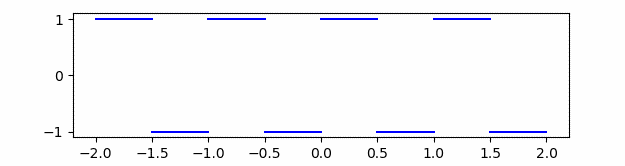

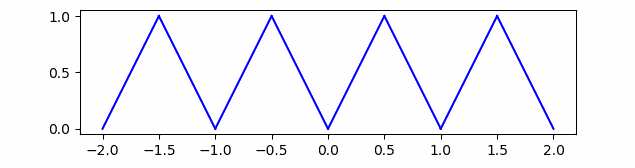

Here is Kahan’s algorithm for computing ad − bc for floating point numbers.

w = RN(bc)

e = RN(w – bc)

f = RN(ad – w)

x = RN(f + e)

The function RN computes its argument exactly, then rounds the result to the nearest floating point value, rounding to even in case of a tie. (This is the default rounding mode for compilers like gcc, so the C implementation of the algorithm will directly follow the more abstract specification above.)

If there were no rounding we would have

w = bc

e = w − bc = 0

f = ad − w = ad − bc

x = f + e = ad − bc + 0 = ad − bc

But of course there is rounding, and so Kahan’s steps that seem redundant are actually necessary and clever. In floating point arithmetic, e is not zero, but it does exactly equal w – bc. This is important to why the algorithm works.

Here is a C implementation of Kahan’s algorithm and a demonstration of how it differs from the direct alternative.

#include <math.h>

#include <stdio.h>

double naive_det(double a, double b, double c, double d) {

return a*d - b*c;

}

double kahan_det(double a, double b, double c, double d) {

double w = b*c;

double e = fma(-b, c, w);

double f = fma(a, d, -w);

return (f+e);

}

int main() {

double a = M_PI;

double b = M_E;

double c = 355.0 / 113.0;

double d = 23225.0 / 8544.0;

printf("Naive: %15.15g\n", naive_det(a, b, c, d));

printf("Kahan: %15.15g\n", kahan_det(a, b, c, d));

}

The values of c and d were chosen to be close to M_PI and M_E so that the naive computation of the determinant would less accurate. See these post on rational approximations to π and rational approximations to e.

The code above prints

Naive: -7.03944087021569e-07

Kahan: -7.03944088015194e-07

How do we know that Kahan’s result is accurate? Careful analysis of Kahan’s algorithm in [1] gives bounds on its accuracy. In particular, the absolute error is bounded by 1.5 ulps, units of least precision.

More floating point posts

- Anatomy of a floating point number

- The hardest logarithm to compute

- Summing an array of floating point numbers

[1] Further analysis of Kahan’s algorithm for the accurate computation of 2×2 determinants. Claude-Pierre Jeannerod, Nicolas Louvet, and Jean-Michel Muller. Mathematics of Computation, Vol. 82, No. 284 (October 2013), pp. 2245-2264