Suppose you have two normal random variables, X and Y, and that the variance of X is less than the variance of Y.

Let M be an equal mixture of X and Y. That is, to sample from M, you first choose X or Y with equal probability, then you draw a sample from the random variable you chose.

Now suppose you’ve observed an extreme value of M. Then it is more likely that the value came from Y. The means of X and Y don’t matter, other than determining the cutoff for what “extreme” means.
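A quick simulation can make the claim concrete. The sketch below is only an illustration: the parameters (X standard normal, Y with mean 0 and standard deviation 2), the cutoff, and the function names are assumptions chosen for the example, not values from the text.

```python
import random

def sample_mixture(rng, mu_x, sd_x, mu_y, sd_y):
    """Draw one sample from the equal mixture; return (value, came_from_y)."""
    from_y = rng.random() < 0.5            # fair coin picks the component
    if from_y:
        return rng.gauss(mu_y, sd_y), True
    return rng.gauss(mu_x, sd_x), False

def fraction_from_y(t, n=100_000, seed=0):
    """Among simulated samples with |m| > t, the fraction that came from Y."""
    rng = random.Random(seed)
    extreme = 0
    extreme_from_y = 0
    for _ in range(n):
        # Assumed parameters: X ~ N(0, 1), Y ~ N(0, 2^2)
        m, from_y = sample_mixture(rng, 0.0, 1.0, 0.0, 2.0)
        if abs(m) > t:
            extreme += 1
            extreme_from_y += from_y
    return extreme_from_y / extreme if extreme else float("nan")

print(fraction_from_y(3.0))
```

With these assumed parameters, the large majority of samples beyond the cutoff come from Y, the component with the larger variance.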

High-level math

To state things more precisely, there is some value t such that the posterior probability that a sample m from M came from Y, given that |m| > t, is greater than the posterior probability that m came from X.

Let’s just look at the right-hand tails, even though the principle applies to both tails. If X and Y have the same variance, but the mean of X is greater, then larger values of M are more likely to have come from X. Now suppose the variance of Y is larger. As you go further out in the right tail of M, the posterior probability of an extreme value having come from Y increases, and eventually it surpasses the posterior probability of the sample having come from X. If X has a larger mean than Y, that will delay the point at which the posterior probability of Y passes the posterior probability of X, but eventually variance matters more than mean.
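One way to see why variance eventually wins is to compare the two densities directly. This is a sketch for the density at a single point m rather than for a tail probability, and it assumes X has been standardized to mean 0 and variance 1 while Y has mean μ and standard deviation σ > 1:

```latex
\log \frac{f_Y(m)}{f_X(m)}
  = \frac{1}{2}\left(1 - \frac{1}{\sigma^2}\right) m^2
  + \frac{\mu}{\sigma^2}\, m
  - \frac{\mu^2}{2\sigma^2}
  - \log \sigma
```

The coefficient of m² is positive when σ > 1, so the log ratio goes to infinity as m becomes large in either direction. The mean μ only enters through the linear and constant terms, which can shift where the ratio crosses zero but cannot change its eventual sign.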

Detailed math

Let’s give a name to the random variable that determines whether we choose X or Y. Let’s call it C for coin flip, and assume C takes on 0 and 1 each with probability 1/2. If C = 0 we sample from X and if C = 1 we sample from Y. We want to compute the probability P(C = 1 | M ≥ t).

Without loss of generality we can assume X has mean 0 and variance 1. (Otherwise transform X and Y by subtracting off the mean of X and then dividing by the standard deviation of X.) Denote the mean of Y by μ and the standard deviation of Y by σ.

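From Bayes’ theorem we have

$$P(C = 1 \mid M \ge t) = \frac{P(Y \ge t)}{P(X \ge t) + P(Y \ge t)} = \frac{\Phi^c\left(\frac{t - \mu}{\sigma}\right)}{\Phi^c(t) + \Phi^c\left(\frac{t - \mu}{\sigma}\right)}$$

where Φc(t) = P(Z ≥ t) for a standard normal random variable Z. The prior probabilities of 1/2 cancel from the numerator and denominator.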
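The right-tail posterior is the tail probability of Y divided by the sum of the tail probabilities of X and Y. Here is a minimal sketch using only the standard library, with X assumed standardized as above. The function names `phi_c` and `posterior_y_right` are made up for this illustration.

```python
import math

def phi_c(z):
    """Standard normal survival function, P(Z >= z)."""
    return 0.5 * math.erfc(z / math.sqrt(2.0))

def posterior_y_right(t, mu, sigma):
    """P(C = 1 | M >= t) when X ~ N(0, 1) and Y ~ N(mu, sigma^2)."""
    p_y = phi_c((t - mu) / sigma)   # P(Y >= t)
    p_x = phi_c(t)                  # P(X >= t)
    return p_y / (p_x + p_y)        # the equal priors cancel

# Identical components are indistinguishable, so the posterior is 1/2.
print(posterior_y_right(0.0, 0.0, 1.0))
# A larger variance dominates far enough out in the tail.
print(posterior_y_right(5.0, -2.0, math.sqrt(10.0)))
```

For very large t both tail probabilities underflow to zero, so a more careful version would work with logarithms of the tail probabilities.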

Similarly, to compute P(C = 1 | M ≤ t), just flip the direction of the inequality signs and replace Φc(t) = P(Z ≥ t) with Φ(t) = P(Z ≤ t).

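The calculation for P(C = 1 | |M| ≥ t) is similar. For t ≥ 0,

$$P(C = 1 \mid |M| \ge t) = \frac{\Phi^c\left(\frac{t - \mu}{\sigma}\right) + \Phi\left(\frac{-t - \mu}{\sigma}\right)}{2\,\Phi^c(t) + \Phi^c\left(\frac{t - \mu}{\sigma}\right) + \Phi\left(\frac{-t - \mu}{\sigma}\right)}$$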
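The two-sided case can be sketched the same way, adding the left and right tail probabilities of each component. As before, X is assumed to be standard normal, and the function names are hypothetical.

```python
import math

def phi_c(z):
    """Standard normal survival function, P(Z >= z)."""
    return 0.5 * math.erfc(z / math.sqrt(2.0))

def posterior_y_abs(t, mu, sigma):
    """P(C = 1 | |M| >= t) for t >= 0, X ~ N(0, 1), Y ~ N(mu, sigma^2)."""
    # P(|Y| >= t) = P(Y >= t) + P(Y <= -t); by symmetry of the standard
    # normal, P(Y <= -t) equals phi_c((t + mu) / sigma).
    p_y = phi_c((t - mu) / sigma) + phi_c((t + mu) / sigma)
    p_x = 2.0 * phi_c(t)            # P(|X| >= t)
    return p_y / (p_x + p_y)

print(posterior_y_abs(3.0, -2.0, math.sqrt(10.0)))
```

At t = 0 the condition is always satisfied, so the function returns the prior probability of 1/2.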

Example

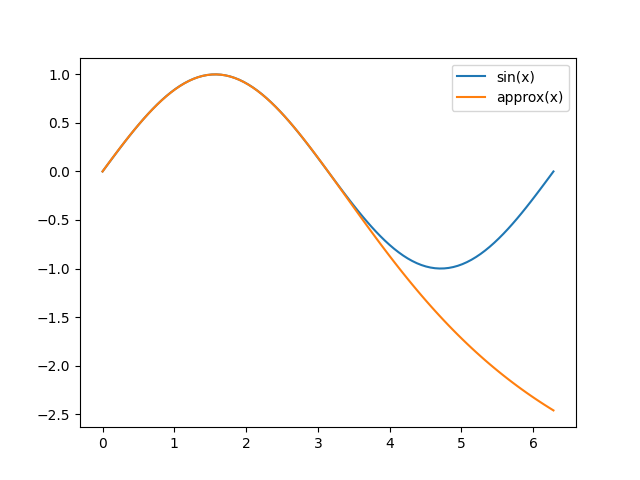

Suppose Y has mean −2 and variance 10. The blue curve shows that a large negative sample from M very likely comes from Y, and the orange curve shows that a large positive sample very likely comes from Y as well.

The dip in the orange curve shows the transition zone where X’s advantage due to a larger mean gives way to the disadvantage of a smaller variance. This illustrates that the posterior probability of Y increases eventually but not necessarily monotonically.
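The dip can also be located numerically. The sketch below evaluates the right-tail posterior for the example parameters (Y with mean −2 and variance 10, X standard normal) on a grid. The grid range and step are arbitrary choices for illustration, and the helper names are hypothetical.

```python
import math

def phi_c(z):
    """Standard normal survival function, P(Z >= z)."""
    return 0.5 * math.erfc(z / math.sqrt(2.0))

def posterior_y_right(t, mu, sigma):
    """P(C = 1 | M >= t) when X ~ N(0, 1) and Y ~ N(mu, sigma^2)."""
    p_y = phi_c((t - mu) / sigma)
    p_x = phi_c(t)
    return p_y / (p_x + p_y)

mu, sigma = -2.0, math.sqrt(10.0)           # example parameters from the text
grid = [i / 10 for i in range(-100, 61)]    # t from -10 to 6 in steps of 0.1
curve = [posterior_y_right(t, mu, sigma) for t in grid]

t_min = grid[curve.index(min(curve))]       # cutoff where the dip bottoms out
print(f"lowest posterior {min(curve):.3f} at t = {t_min}")
print(f"posterior at t = {grid[-1]}: {curve[-1]:.4f}")
```

The posterior starts near 1/2 for very negative cutoffs, falls below 1/2 for moderate cutoffs, and then climbs toward 1 as the cutoff moves into the far right tail.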

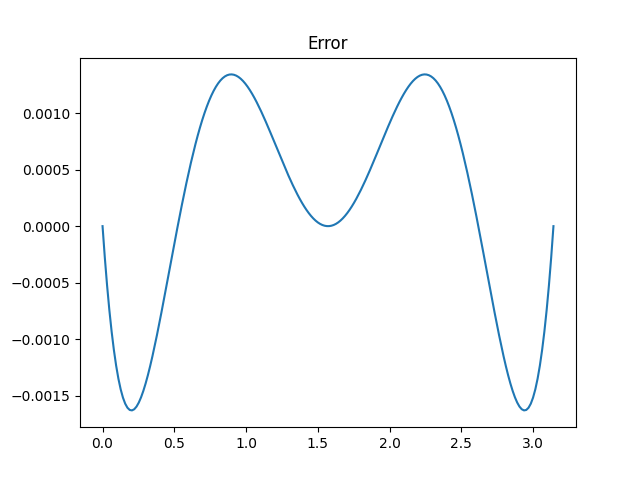

Here’s a plot showing the probability of a sample having come from Y depending on its absolute value.
