The Playfair cipher was the first encryption technique to encrypt text two letters at a time. Instead of substituting one letter for another, it substitutes one pair of letters for another pair. This makes the method more secure than a simple substitution cipher, but hardly secure by modern standards.

The Playfair cipher was used (and broken) during the First World War. I vaguely remember reading somewhere that the cipher took about an hour to break using pencil and paper. It was secure in the sense that it could be used for messages that only needed to stay secret for less time than it took to break the cipher. It was more secure than simple substitution, and easy to encrypt and decrypt manually.

True to Stigler’s law of eponymy, the Playfair cipher was not named after its inventor, Charles Wheatstone of Wheatstone bridge fame, but after Lyon Playfair who popularized the method. Playfair acknowledged Wheatstone, but his name stuck to the method nevertheless.

Message preparation

The Playfair cipher uses a 5 × 5 grid of letters, so some letter of the Roman alphabet has to go. A common choice was to use the same letter for I and J. (A variation on the method using a 6 × 6 grid of letters and digits would not have to leave out any letters.)

For reasons that will soon be apparent, double letters had to be broken up, say with an X. So “FOOTBALL” would become “FOXOTBALXL.” Amusingly, “MISSISSIPPI” would become “MISXSISXSIPXPI.”

After eliminating Js and splitting double letters, the message is divided into pairs. So FOXOTBALXL becomes FO XO TB AL XL.
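Here is a minimal Python sketch of the preparation steps described above. The function name `prepare` and the `filler` parameter are my own, and padding an odd-length message with the filler is an assumption, since the examples above all come out even. The sketch does not handle a message that already contains a doubled filler letter.

```python
import string

def prepare(message, filler="X"):
    """Merge J into I, split double letters, and divide into pairs."""
    # Keep only A-Z, and use the same letter for I and J.
    letters = [c for c in message.upper() if c in string.ascii_uppercase]
    letters = ["I" if c == "J" else c for c in letters]

    out = []
    for c in letters:
        # Break up every doubled letter with the filler.
        if out and out[-1] == c:
            out.append(filler)
        out.append(c)

    # Assumption: pad an odd-length message so the last letter has a partner.
    if len(out) % 2:
        out.append(filler)

    return ["".join(out[i:i + 2]) for i in range(0, len(out), 2)]

print(" ".join(prepare("FOOTBALL")))   # FO XO TB AL XL
```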

Encryption algorithm

The key for the encryption method is the arrangement of the letters in a square. In practice, the key would be some word or phrase that was used to permute the alphabet, and then that permutation was filled into the grid.
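As a rough sketch of how a key phrase can permute the alphabet, the code below uses one common convention: write the distinct letters of the key first, then the unused letters in alphabetical order, and fill the grid row by row. The text above does not say which convention was used, so treat this as an assumption. The function name `make_grid` and the key `PLAYFAIR` are only for illustration.

```python
import string

def make_grid(key):
    """Build a 5 x 5 grid: distinct key letters first, then the rest."""
    # 25-letter alphabet, with I and J sharing a cell.
    alphabet = string.ascii_uppercase.replace("J", "")
    seen = []
    for c in key.upper().replace("J", "I") + alphabet:
        if c in alphabet and c not in seen:
            seen.append(c)
    # Fill the grid row by row.
    return ["".join(seen[i:i + 5]) for i in range(0, 25, 5)]

for row in make_grid("PLAYFAIR"):
    print(row)
```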

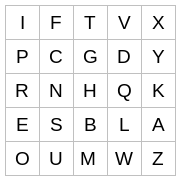

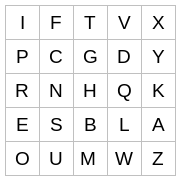

Here’s a grid I constructed by asking Python for a random permutation of the alphabet.

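Given a pair of letters, the two letters either lie on the same row, the same column, or are in different rows and columns. (This is why you break up double letters: a pair like OO occupies a single cell, so it lies in the same row and the same column at once, and the rules below would be ambiguous.)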

If the two letters lie in the same row, advance each letter one position, wrapping around if necessary. For example, IT would be encrypted as FV, and TX would be encrypted as VI.

If the two letters lie in the same column, proceed analogously, moving each letter down. So TH would be encrypted as GB and OI would be encrypted as IP.

Finally, if the two letters are in different rows and columns, they form the diagonal corners of a rectangle. Replace the two letters with the letters on the remaining corners. For example, IH becomes TR, HE becomes RB, GW becomes DM, etc.
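The three rules can be sketched in a few lines of Python. The grid below is hypothetical: I filled it in so that it reproduces the pair examples in the text, and the cells those examples do not determine are arbitrary. It is not claimed to be the grid in the image. The names `GRID`, `locate`, and `encrypt_pair` are my own.

```python
# Hypothetical grid, chosen only to be consistent with the examples in the text.
GRID = [
    "WOAMC",
    "XIFTV",
    "DPKGL",
    "NRQHS",
    "UEYBZ",
]

def locate(grid):
    """Map each letter to its (row, column) position."""
    return {ch: (r, c) for r, row in enumerate(grid) for c, ch in enumerate(row)}

def encrypt_pair(pair, grid=GRID):
    """Encrypt one pair of distinct letters with the Playfair rules."""
    a, b = pair
    if a == b:
        raise ValueError("double letters must be split before encryption")
    pos = locate(grid)
    (r1, c1), (r2, c2) = pos[a], pos[b]
    if r1 == r2:
        # Same row: advance each letter one position to the right, wrapping.
        return grid[r1][(c1 + 1) % 5] + grid[r2][(c2 + 1) % 5]
    if c1 == c2:
        # Same column: move each letter down one position, wrapping.
        return grid[(r1 + 1) % 5][c1] + grid[(r2 + 1) % 5][c2]
    # Rectangle: each letter keeps its row and takes the other letter's column.
    return grid[r1][c2] + grid[r2][c1]

for pair in ["IT", "TX", "TH", "OI", "IH", "HE", "GW"]:
    print(pair, "->", encrypt_pair(pair))
```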

Cryptanalysis

Just as you can attack a simple substitution cipher by looking at letter frequencies, you can attack a Playfair cipher by looking at bigram frequencies. You can find these frequencies for English text on Peter Norvig’s site. TH sticks out in bigram frequencies similarly to how E sticks out in letter frequencies. However, bigram frequencies are more evenly distributed than letter frequencies.
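As a starting point for that kind of attack, here is a small sketch that tallies bigrams in a ciphertext. It counts non-overlapping pairs, on the assumption that the ciphertext starts on a pair boundary, since Playfair encrypts the message pair by pair. The function name `bigram_counts` and the sample ciphertext are made up for illustration.

```python
from collections import Counter

def bigram_counts(ciphertext):
    """Count non-overlapping letter pairs in a ciphertext."""
    letters = [c for c in ciphertext.upper() if "A" <= c <= "Z"]
    # Step by two so that pairs line up with the cipher's own pairs.
    # A trailing unpaired letter, if any, is ignored.
    pairs = ["".join(letters[i:i + 2]) for i in range(0, len(letters) - 1, 2)]
    return Counter(pairs)

counts = bigram_counts("GB FV GB DM")   # made-up ciphertext
print(counts.most_common(3))
```

The most common ciphertext pairs are then candidates for the encrypted forms of common plaintext bigrams such as TH.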

As I pointed out in the previous post, mapping each of the 676 pairs of letters to a randomly generated list of 676 other pairs of letters will not create a secure cipher. But Playfair is much weaker than such a random assignment. There is a lot of structure to the Playfair cipher. This makes it more convenient to use, and easier to break.

Suppose pairs of letters were mapped to random pairs of letters and you learn that GB is the encrypted form of TH. What have you learned about decrypting any other pair? Nothing, except that you’ve eliminated 1 out of 676 possibilities.

But if you learn that a Playfair cipher sends TH to GB, you learn that either (1) T, H, G, and B all lie in the same row or column, or (2) T and B are in the same column, H and G are in the same column, T and G are in the same row, and H and B are in the same row.

Symmetry

If we rotate the rows or columns in our encryption matrix, nothing changes. This is easy to see in the case when two letters are in the same row or in the same column. It’s a little harder to see but still true when the letters are in different rows and columns.

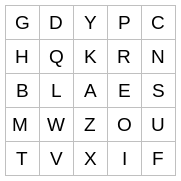

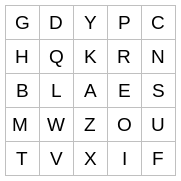

For example, consider the following encryption matrix, formed by rotating the columns two positions and the rows one position.

If you work through all the examples above, you’ll see that they remain the same. IT still goes to FV etc.

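The reason rotating columns or rows doesn’t make a difference is that in matrix notation, the encryption algorithm does not depend on the subscripts per se but only on differences between subscripts mod 5. A rotation adds the same constant to every row subscript or every column subscript, so those differences are unchanged.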

It almost doesn’t matter if you transpose the encryption matrix. If you transpose a matrix, elements that were in the same row are now in the same column and vice versa. When two letters are not in the same row or column, transposing the encryption matrix transposes the encrypted pair. In the example above HE goes to RB. If we transpose the encryption matrix, HE goes to BR.
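The rotation and transpose claims above can be checked by brute force over all 600 ordered pairs of distinct letters. The sketch below uses a hypothetical grid chosen to reproduce the pair examples in the text, not necessarily the grid in the images. The helper names `rotate` and `transpose` are my own, and the direction of rotation is an arbitrary choice since the result holds either way.

```python
from itertools import permutations

# Hypothetical grid, chosen only to be consistent with the examples in the text.
GRID = ["WOAMC", "XIFTV", "DPKGL", "NRQHS", "UEYBZ"]

def encrypt_pair(pair, grid):
    """Encrypt one pair of distinct letters with the Playfair rules."""
    pos = {ch: (r, c) for r, row in enumerate(grid) for c, ch in enumerate(row)}
    (r1, c1), (r2, c2) = pos[pair[0]], pos[pair[1]]
    if r1 == r2:   # same row: shift right
        return grid[r1][(c1 + 1) % 5] + grid[r2][(c2 + 1) % 5]
    if c1 == c2:   # same column: shift down
        return grid[(r1 + 1) % 5][c1] + grid[(r2 + 1) % 5][c2]
    return grid[r1][c2] + grid[r2][c1]   # rectangle: swap columns

def rotate(grid, row_shift, col_shift):
    """Cyclically rotate the rows and columns of the grid."""
    rows = grid[row_shift % 5:] + grid[:row_shift % 5]
    return [row[col_shift % 5:] + row[:col_shift % 5] for row in rows]

def transpose(grid):
    """Swap the roles of rows and columns."""
    return ["".join(col) for col in zip(*grid)]

pairs = ["".join(p) for p in permutations("".join(GRID), 2)]

# Rotating rows by one and columns by two leaves every encryption unchanged.
rotated = rotate(GRID, 1, 2)
assert all(encrypt_pair(p, rotated) == encrypt_pair(p, GRID) for p in pairs)

# Transposing either leaves the encrypted pair alone or reverses it.
transposed = transpose(GRID)
for p in pairs:
    before, after = encrypt_pair(p, GRID), encrypt_pair(p, transposed)
    assert after in (before, before[::-1])

print(encrypt_pair("HE", GRID), encrypt_pair("HE", transposed))   # RB BR
```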

We said above that the key to a Playfair cipher is a permutation of the alphabet. But many keys correspond to the same encryption mapping. The analyst doesn’t need to recover the original encryption matrix but only some rearrangement of it.
