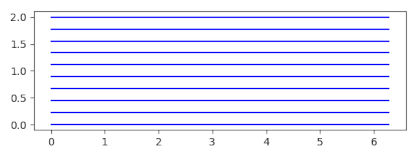

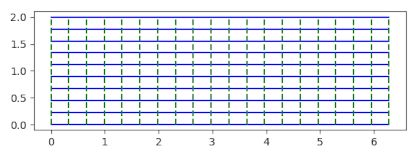

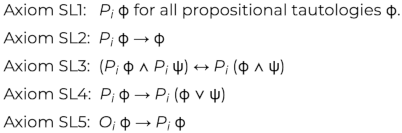

Geometric equations often involve a determinant with a column of 1s. For example, the equation of a line through two points:

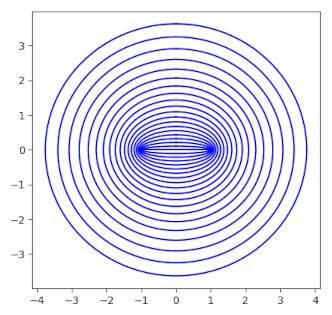

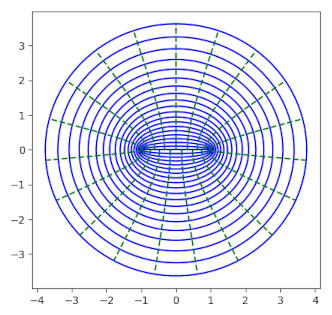

The equation of a circle through three points:
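For reference, one standard way to write this condition is shown here. The notation $(x_i, y_i)$ for the three given points is an assumption and is not taken from the original figures.

$$
\begin{vmatrix}
x^2 + y^2 & x & y & 1 \\
x_1^2 + y_1^2 & x_1 & y_1 & 1 \\
x_2^2 + y_2^2 & x_2 & y_2 & 1 \\
x_3^2 + y_3^2 & x_3 & y_3 & 1
\end{vmatrix} = 0
$$

Expanding along the first row gives an equation of the form $a(x^2 + y^2) + bx + cy + d = 0$, which is a circle when the three points are not collinear.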

The equation of a general conic section through five points:

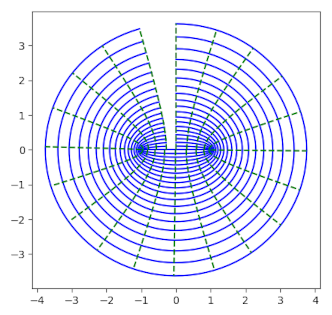

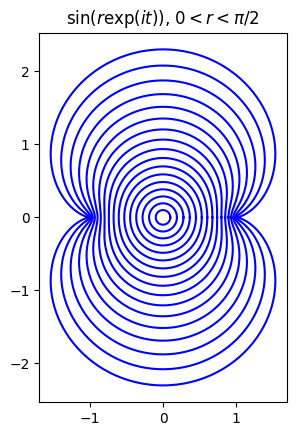

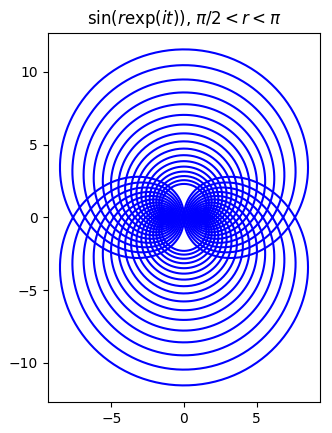

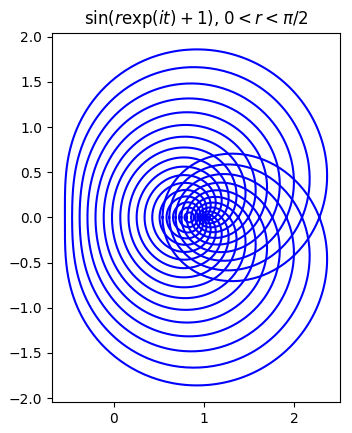

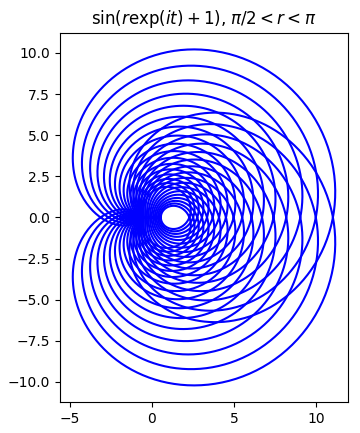

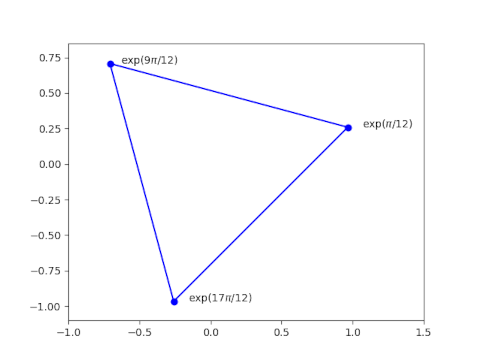

The equation of a Möbius (bilinear) transformation sending z₁, z₂, and z₃ to w₁, w₂, and w₃:
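One way to write this condition follows from clearing the denominator in $w = (az + b)/(cz + d)$, which gives a relation that is linear in $zw$, $z$, $w$, and $1$. The column order below is chosen for illustration and may differ from the original figure.

$$
\begin{vmatrix}
zw & z & w & 1 \\
z_1 w_1 & z_1 & w_1 & 1 \\
z_2 w_2 & z_2 & w_2 & 1 \\
z_3 w_3 & z_3 & w_3 & 1
\end{vmatrix} = 0
$$

Each of the three given pairs satisfies the same linear relation as the general pair $(z, w)$, so the four rows are linearly dependent.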

Why all the determinants and why all the 1s?

When you see a determinant equal to zero, you immediately think of matrix rows or columns being linearly dependent. But in the examples above, it isn’t the Cartesian coordinates that are linearly dependent; it’s the projective coordinates.
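As a small check of this dependence for the line case, the sketch below appends a 1 to each Cartesian point and evaluates the resulting 3×3 determinant. The helper names `det3` and `on_line` are illustrative, not from any library. It assumes integer (exact) coordinates and that the two defining points are distinct.

```python
def det3(m):
    """Determinant of a 3x3 matrix given as three rows of three numbers."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def on_line(p, p1, p2):
    """True when p lies on the line through the distinct points p1 and p2.

    Each Cartesian point (x, y) is lifted to the projective triple
    (x, y, 1). The three triples are linearly dependent exactly when
    the determinant of the stacked rows is zero.
    """
    rows = [(x, y, 1) for (x, y) in (p, p1, p2)]
    return det3(rows) == 0


print(on_line((2, 2), (0, 0), (1, 1)))  # prints True: the points are collinear
print(on_line((2, 3), (0, 0), (1, 1)))  # prints False: (2, 3) is off the line
```

The Cartesian pairs (0, 0), (1, 1), (2, 2) include the zero vector, so talking about their linear dependence as 2-vectors says nothing about collinearity. The lifted triples are what carry the geometric information.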

The 1s need not be in the last column: you could permute the rows and columns any way you like and the determinant would still be zero. They are placed last as a clue to where they came from, because you can take Cartesian coordinates into projective coordinates by adding a 1 at the end.
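The permutation claim can be checked directly. The following is a minimal sketch with hypothetical helper names (`det3`, `lift`, `permute_columns`), using small integer points chosen for illustration. Permuting columns changes the determinant by at most a sign, so a zero determinant stays zero.

```python
from itertools import permutations


def det3(m):
    """Determinant of a 3x3 matrix given as three rows of three numbers."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def lift(point):
    """Cartesian (x, y) -> projective (x, y, 1) by appending a 1."""
    return tuple(point) + (1,)


def permute_columns(rows, order):
    """Reorder the columns of every row according to `order`."""
    return [tuple(row[j] for j in order) for row in rows]


collinear = [lift(p) for p in [(0, 0), (1, 1), (2, 2)]]
triangle = [lift(p) for p in [(0, 0), (1, 0), (0, 1)]]

# Evaluate the determinant for all six column orders.
dets_collinear = [det3(permute_columns(collinear, o)) for o in permutations(range(3))]
dets_triangle = [det3(permute_columns(triangle, o)) for o in permutations(range(3))]

print(dets_collinear)         # every entry is 0
print(sorted(dets_triangle))  # only the sign varies between column orders
```

The same holds for row permutations, since swapping two rows also just flips the sign.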

This 1 is sort of a silent partner, and can be ignored much of the time. But the last projective coordinate is critical when it’s necessary to be rigorous about points at infinity. The examples above are interesting because they are an application of homogeneous coordinates when there’s no concern about points at infinity.