You can think of org-mode as simply a kind of markdown, a plain text file that can be exported to fancier formats such as HTML or PDF. It’s a lot more than that, but that’s a reasonable place to start.

Org-mode also integrates with source code. You can embed code in your file and have the code and/or the result of running the code appear when you export the file to another format.

Org-mode as notebook

You can use org-mode as a notebook, something like a Jupyter notebook, but much simpler. An org file is a plain text file, and you can execute embedded code right there in your editor. You don’t need a browser, and there’s no hidden state.

Here’s an example of mixing markup and code:

The volume of an n-sphere of radius r is

$$\frac{\pi^{\frac{n}{2}}}{\Gamma\left(\frac{n}{2} + 1\right)}r^n.$$

#+begin_src python :session
from math import pi
from scipy.special import gamma

def vol(r, n):
    return pi**(n/2)*r**n/gamma(n/2 + 1)

vol(1, 5)
#+end_src

If you were to export the file to PDF, the equation for the volume of a sphere would be compiled into an image using LaTeX.

To run the code [1], put your cursor somewhere in the code block and type C-c C-c. When you do, the following lines will appear below your code.

#+RESULTS:

: 5.263789013914324
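
As a quick sanity check on the formula, here is a standalone sketch in plain Python, outside of org-mode. It uses `math.gamma` from the standard library rather than SciPy (my substitution, to keep the snippet dependency-free) and compares the formula with the familiar length, area, and volume formulas in dimensions 1, 2, and 3.

```python
from math import gamma, isclose, pi


def vol(r, n):
    """Volume enclosed by a sphere of radius r in n dimensions."""
    return pi ** (n / 2) * r**n / gamma(n / 2 + 1)


# Familiar low-dimensional cases.
assert isclose(vol(1, 1), 2)                  # length of the interval [-1, 1]
assert isclose(vol(3, 2), pi * 3**2)          # area of a disk of radius 3
assert isclose(vol(3, 3), 4 / 3 * pi * 3**3)  # volume of a ball of radius 3

# The value reported in the results block above.
assert isclose(vol(1, 5), 5.263789013914324)
```

If any of these checks failed, Python would raise an AssertionError; running the snippet silently means the formula agrees with all four values.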

If you think of your org-mode file as primary, and you’re just inserting some code as a kind of scratch area, an advantage of org-mode is that you never leave your editor.

Jupyter notebooks

Now let’s compare that to a Jupyter notebook. Jupyter organizes everything by cells, and a cell can contain markup or code. So you could create a markup cell and enter the exact same introductory text [2].

The volume of an n-sphere of radius r is

$$\frac{\pi^{\frac{n}{2}}}{\Gamma\left(\frac{n}{2} + 1\right)}r^n.$$

When you “run” the cell, the LaTeX is processed and you see the typeset expression rather than its LaTeX source. You can click on the cell to see the LaTeX code again.

Then you would enter the Python code in another cell. When you run the cell you see the result, much as in org-mode. And you could export your notebook to PDF as with org-mode.

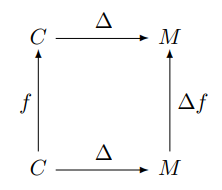

File diff

Now suppose we make a couple of small changes. We want the n and r in the introductory text to be set in math mode [3], and we want to change vol(1, 5) to vol(2, 5).

Let’s run diff on the two versions of the org-mode file and on the two versions of the Jupyter notebook.

The differences in the org files are easy to spot:

1c1

< The volume of an n-sphere of radius r is

---

> The volume of an \(n\)-sphere of radius \(r\) is

12c12

< vol(1, 5)

---

> vol(2, 5)

16c16,17

< : 5.263789013914324

---

> : 168.44124844525837

However, the differences in the Jupyter files are more complicated:

5c5

< "id": "2a1b0bc4",

---

> "id": "a0a89fcf",

8c8

< "The volume of an n-sphere of radius r is \n",

---

> "The volume of an $n$-sphere of radius $r$ is \n",

15,16c15,16

< "execution_count": 1,

< "id": "888660a2",

---

> "execution_count": 2,

> "id": "1adcd8b1",

22c22

< "5.263789013914324"

---

> "168.44124844525837"

25c25

< "execution_count": 1,

---

> "execution_count": 2,

37c37

< "vol(1, 5)"

---

> "vol(2, 5)"

43c43

< "id": "f8d4d1b0",

There’s a lot of extra stuff in a Jupyter notebook. This is a trivial notebook, and more complex notebooks have more extra stuff. An apparently small change to the notebook can cause a large change in the underlying notebook file. This makes it difficult to track changes in a Jupyter notebook in a version control system.
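
To get a rough feel for how much of a notebook diff is bookkeeping, here's a minimal sketch. The `make_notebook` helper builds a simplified, hypothetical stand-in for a notebook file (real .ipynb files carry more fields), and `strip_volatile` and `changed_lines` are illustrative names of my own, not part of Jupyter. The sketch counts changed lines between two versions before and after dropping cell ids, execution counts, and outputs.

```python
import difflib
import json


def make_notebook(call, result, count, ids):
    """Build a tiny notebook-like dict (simplified, hypothetical structure)."""
    return {
        "cells": [
            {"cell_type": "markdown", "id": ids[0],
             "source": ["The volume of an n-sphere of radius r is\n"]},
            {"cell_type": "code", "id": ids[1], "execution_count": count,
             "outputs": [{"data": {"text/plain": [result]},
                          "execution_count": count,
                          "output_type": "execute_result"}],
             "source": [call]},
        ]
    }


def strip_volatile(nb):
    """Return a copy of nb without cell ids, execution counts, or outputs."""
    volatile = ("id", "execution_count", "outputs")
    return {"cells": [{k: v for k, v in cell.items() if k not in volatile}
                      for cell in nb["cells"]]}


def changed_lines(a, b):
    """Count added or removed lines between two JSON serializations."""
    ta = json.dumps(a, indent=1, sort_keys=True).splitlines()
    tb = json.dumps(b, indent=1, sort_keys=True).splitlines()
    return sum(1 for line in difflib.ndiff(ta, tb)
               if line.startswith(("- ", "+ ")))


# Two versions that differ only in the function call and what follows from it.
old = make_notebook("vol(1, 5)", "5.263789013914324", 1,
                    ["2a1b0bc4", "888660a2"])
new = make_notebook("vol(2, 5)", "168.44124844525837", 2,
                    ["a0a89fcf", "1adcd8b1"])

print("raw:", changed_lines(old, new))
print("stripped:", changed_lines(strip_volatile(old), strip_volatile(new)))
```

With the volatile fields stripped, the only change left is the one line of source that was actually edited: one line removed and one line added.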

[1] Before this will work, you have to tell Emacs that Python is one of the languages you want to run inside org-mode. I have the following line in my init file to tell Emacs that I want to be able to run Python, DITAA, R, and Perl.

(org-babel-do-load-languages 'org-babel-load-languages '((python . t) (ditaa . t) (R . t) (perl . t)))

[2] Org-mode will let you use \[ and \] to bracket LaTeX code for a displayed equation, and it will also let you use $$. Jupyter only supports the latter.

[3] In org-mode, putting dollar signs around variables sometimes works and sometimes doesn’t. In this example, it works for the “r” but not for the “n”. This is very annoying, but it can be fixed by using \( and \) to enter and leave math mode rather than using a dollar sign for both.